SoK: Consensus in the age of blockchains Bano et al., arXiv 2017

There are so many things to consider when evaluating a blockchain-based technology or system. For example (and in no particular order):

- What cryptographic building blocks does it depend on?
- Does it offer privacy of identity (anonymity)?
- Does it offer privacy of data?
- Is data stored on chain or off chain (i.e., is there a separation of control plane and data plane)?
- Is data recoverable by any participant at any time, or does the system just offer non-repudiation?
- What are the incentives for participants?
- What is the overall system throughput?
- What is the expected latency?
- What security guarantees does it provide?
- What is the threat model, and hence what attacks is the system open to?
- Related, what degrees of freedom do participants have?
- Who or what do you need to trust, and with what?
- How efficient is the system?
- Are smart contracts or other trusted application logic mechanisms supported?
- What is the consistency model (e.g., forks etc.)?
- Can fraudulent activity / participants be detected?
- How is system state recovered (if at all) if attacks are discovered after-the-fact?
- How fast does the blockchain storage requirement grow?
- How quickly can new participants bootstrap?
- What is the consensus model?

And so on… this was just off the top of my head as I sit here in the train, and I’m sure I missed a few. Let me know!

These parameters are intertwined of course, and of all of them, one of the most fundamental is the choice of consensus model, which has a major impact on many of the other design points. We’ve touched quite a few parts of the elephant in previous editions of The Morning Paper (see blockchain related posts here), but today’s choice is a recent and very helpful ‘SoK’ (Systematisation of Knowledge) looking at the variety of consensus mechanisms out there. (I’ve peppered this post with links to previous systems we’ve looked at where applicable).

The inherent complexity of consensus protocols and their rapid and dramatic evolution makes it hard to contextualize the design landscape. We address this challenge by conducting a systematic and comprehensive study of blockchain consensus protocols.

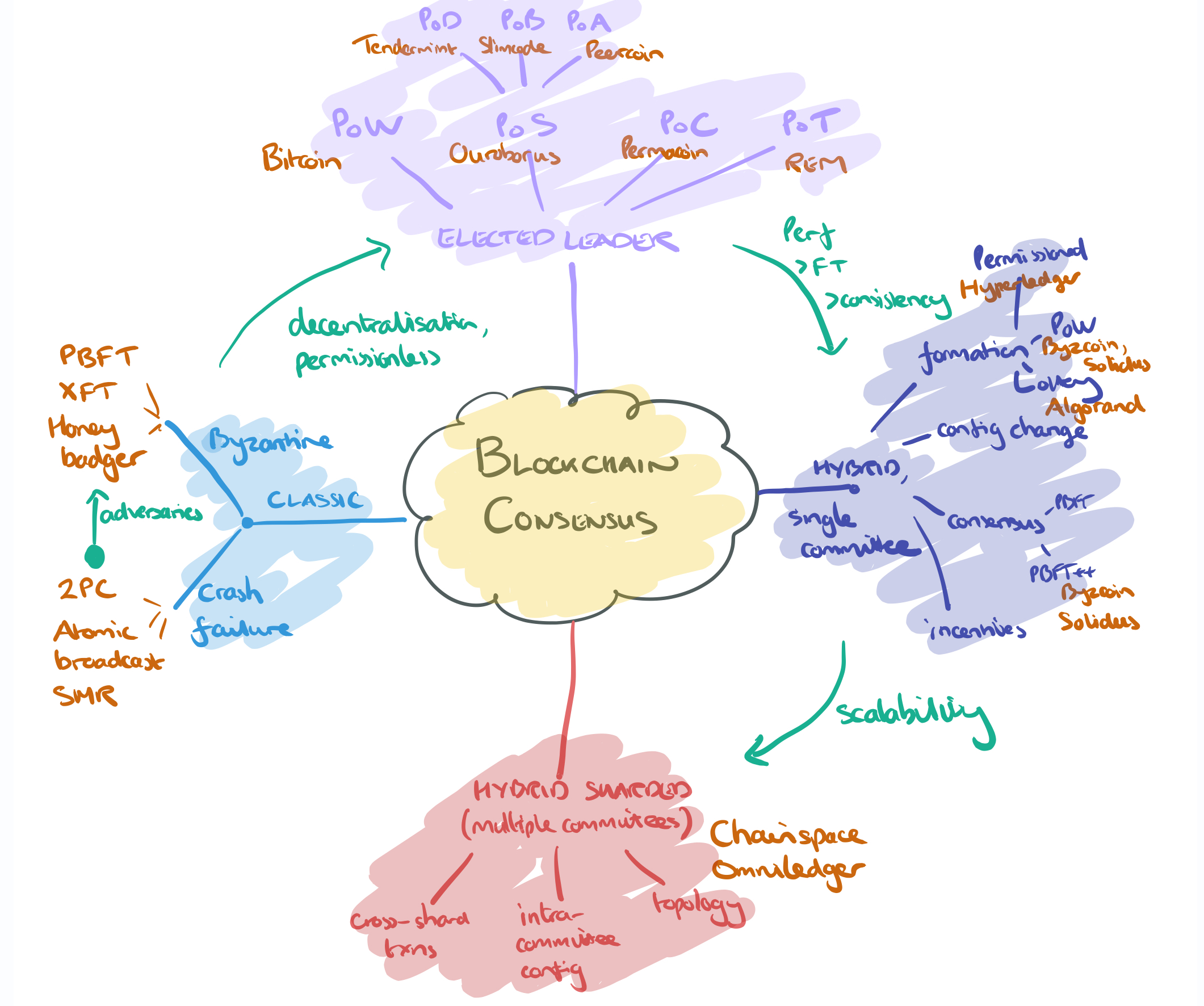

I drew myself a picture to get the broad sweep:


In this model approaches to consensus are divided into four main buckets:

- The classical consensus models, the predecessors (and in some cases building blocks) of blockchain consensus. Here we can think about classic protocols such as 2PC, atomic broadcast, and state machine replication. I previously wrote a mini-series of posts on classic consensus algorithms. Moving from the crash failure model (honest nodes that may fail, but do not deliberately act maliciously) to the Byzantine failure model allows us to tolerate a fraction of malicious / adversarial participants. Here we find protocols such as PBFT, XFT, and Honey Badger. (And then there are mixed mode systems such as Hybster).

- Moving beyond the classical models and their offshoots, we have fully decentralised, permissionless networks. The first generation of systems use a probabilistic leader election process (e.g., who can find the hash first?). The best known and most widely deployed variation is proof-of-work, but there are others such as proof-of-stake (value held on the chain, or variants proof-of-deposit, proof-of-burn, proof-of-coin-age), proof-of-capacity, and proof-of-elapsed time (spent on useful work).

- “A single consensus node suffers from poor performance as well as safety limitations such as weak consistency and low fault-tolerance…” Hence we have hybrid consensus models that use one consensus system (e.g. PoW) to form a committee, and then have that committee run another consensus protocol (e.g. PBFT and its derivatives) internally to agree on blocks.

- For further scalability, we can divide the system into shards, which means multiple committees (one per shard), and introduces the question of cross-shard agreement and transactions.

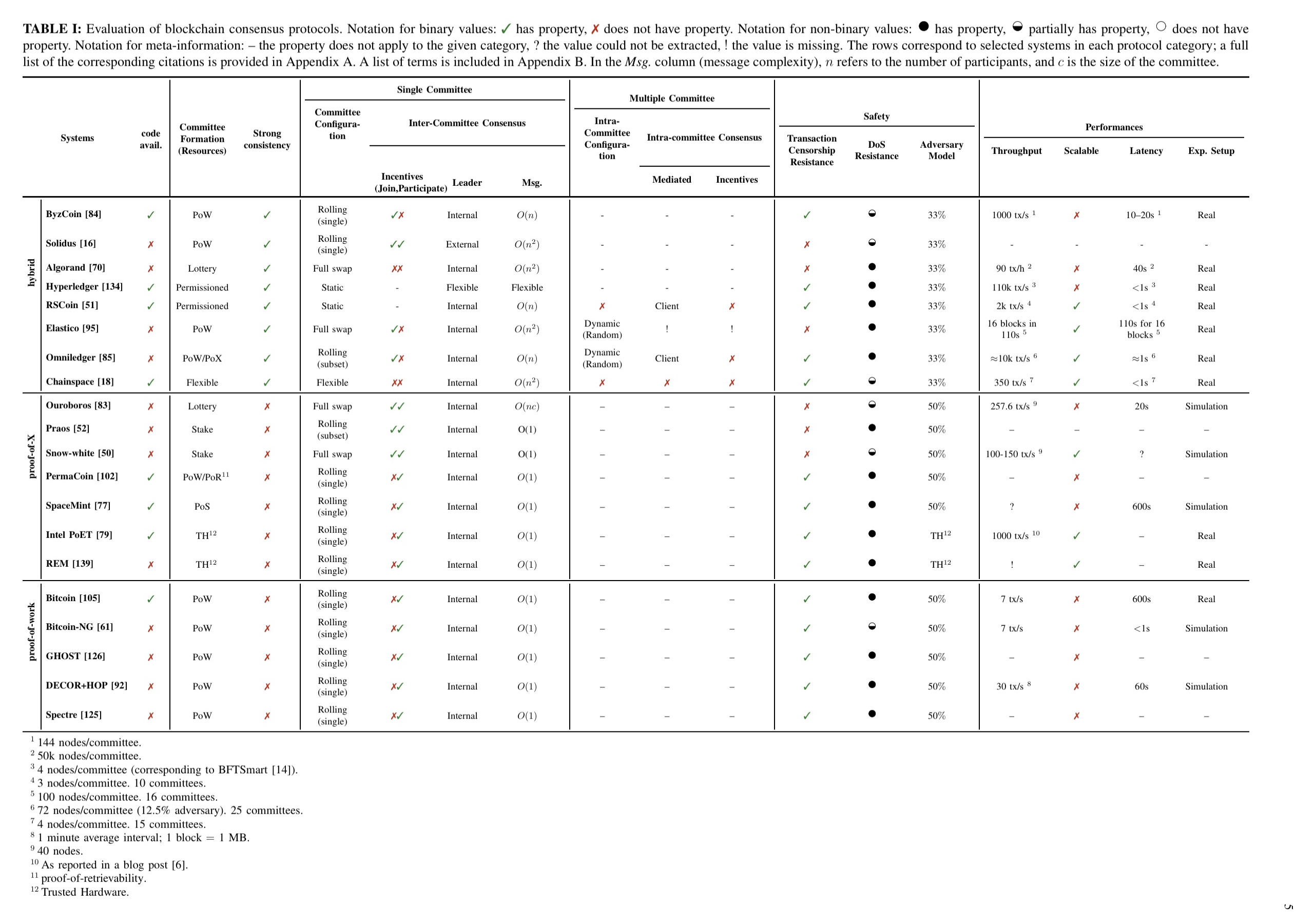

The main table in the paper evaluates a number of systems along these dimensions (and a few others).

Let’s take a deeper look at each of the three post-classical categories in turn.

Probabilistic single leader Proof-of-X systems

The best known and most widely deployed mechanism is of course proof-of-work (aka Nakamoto consensus). Forks can occur, and are resolved by PoW consensus, which amounts to picking the chain with the most accumulated work. The threat model assumes that an adversary with the majority of the computing power on the network (a 51% attack) can outpower the remaining participants in generating a chain with the most accumulated work.
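
To make the 'most accumulated work' rule concrete, here is a minimal sketch of my own (not code from the paper). The block encoding, the `difficulty_bits` parameter, and the function names are all hypothetical, and real systems use a more involved header format and difficulty adjustment.

```python
import hashlib

def mine(prev_hash: str, payload: str, difficulty_bits: int) -> tuple[int, str]:
    """Search for a nonce whose block hash falls below the difficulty target."""
    target = 1 << (256 - difficulty_bits)   # more bits => smaller target => harder puzzle
    nonce = 0
    while True:
        digest = hashlib.sha256(f"{prev_hash}|{payload}|{nonce}".encode()).hexdigest()
        if int(digest, 16) < target:
            return nonce, digest
        nonce += 1

def chain_work(difficulties: list[int]) -> int:
    """Expected number of hashes behind a chain: 2**bits for each block."""
    return sum(1 << bits for bits in difficulties)

def pick_fork(forks: dict[str, list[int]]) -> str:
    """Fork choice: keep the fork with the most accumulated work, not the most blocks."""
    return max(forks, key=lambda name: chain_work(forks[name]))
```

Note that a fork of two blocks mined at 10 difficulty bits each beats a fork of three blocks mined at 8 bits each under this rule, which is why an adversary needs hashing power rather than just a long chain.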

The challenge of scaling PoW blockchains is that the more one increases throughput (block size) or the more one decreases latency (block frequency), the lower the resilience of the network to 51% attacks.

Variations include separating leader election from transaction serialization (Bitcoin-NG), allowing concurrent mining by replacing the linear chain with a DAG (Spectre), and off-chain approaches such as the Lightning Network where transactions are executed off the main consensus path. (“A more detailed discussion of off-chain solutions is outside the scope of this work.”)

Mining pools concentrate power. SmartPool implements a decentralized mining pool, while other proposals try to discourage pooled mining, e.g. by using non-outsourceable proof-of-work puzzles. Related is selfish mining, which allows colluding miners to generate more valid blocks than their computing power would normally allow.

The security of Nakamoto consensus relies on economically incentivising miners to validate and mine blocks, by rewarding them with new coins. However, previous work has shown that Nakamoto consensus is not completely incentive compatible…

The power-intensive PoW in Nakamoto consensus has no external utility, leading to alternate proof-of-X proposals. In proof-of-stake, participants vote on new blocks weighted by their in-band investment (e.g., amount of currency held in the blockchain). One challenge here is keeping track of the changing stakes of the stakeholders. Compared to PoW, three new attacks become possible:

- The nothing at stake attack where miners are incentivised to extend every potential fork

- The grinding attack where a miner re-creates a block multiple times until it is likely they can create a second block shortly afterwards.

- The long-range attack in which an attacker can bribe miners to sell their private keys (and possibly enable an attacker to mine previous blocks and rewrite history). “This is made possible because the bribed miners have already received their external utility for these coins… and no longer have a stake in the system.”

Variations include proof of deposit (miners lock a certain amount of coins which they cannot spend while mining), proof of burn (miners prove they have destroyed a quantity of coins), and proof-of-coin-age (miners show possession of a quantity of coins, weighted by coin age).
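
The basic idea of weighting participants by stake can be sketched as follows. This is my own simplification rather than any particular system's protocol: the `seed` is assumed to be a value all nodes already agree on, and `select_proposer` is a hypothetical name.

```python
import hashlib

def select_proposer(stakes: dict[str, int], seed: str) -> str:
    """Pick a block proposer with probability proportional to stake."""
    total = sum(stakes.values())
    # Derive a deterministic ticket in [0, total) from the shared seed.
    ticket = int(hashlib.sha256(seed.encode()).hexdigest(), 16) % total
    cumulative = 0
    for participant in sorted(stakes):      # fixed order so every node agrees
        cumulative += stakes[participant]
        if ticket < cumulative:
            return participant
    raise AssertionError("unreachable when total stake is positive")
```

If the seed could be influenced by the contents of a block, a proposer could keep re-creating that block until the next ticket favoured them, which is the grinding attack described above.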

In proof of capacity participants vote on new blocks weighted by their capacity to allocate a non-trivial amount of disk space (e.g. PermaCoin). Proof of capacity is vulnerable to centralization though.

In proof of elapsed time the trusted enclave in Intel SGX (or equivalent) is exploited to generate a random wait time and prove the miner waited at least that long. Proof of useful work proposes computing a useful PoW using trusted hardware (e.g. REM).

Hybrid consensus with a single committee

A single consensus node suffers from poor performance as well as safety limitations such as weak consistency and low fault-tolerance. This has resulted in a shift towards consensus protocols where a committee – rather than a single node — collectively drives the consensus.

Now we have a whole bunch of new questions: How do we decide who’s on the committee? How do we rotate committee membership over time? How do we reach consensus among committee members? And what do committees mean for incentives?

There are three basic approaches to committee formation: (i) permissioned systems such as Hyperledger where nodes are granted committee membership based on some external policy, (ii) using proof-of-work to decide which nodes join the committee (e.g. ByzCoin, Solidus, Omniledger), and (iii) using a cryptographic lottery (e.g. Algorand).

For rotating committee membership there are again several schemes from “you don’t” — i.e., the committee is essentially static — to rolling membership changes with single or multiple members being replaced each time, or even (probable) full replacement using lottery-based systems.

Most committee-based systems use classical BFT consensus protocols such as PBFT.

ByzCoin organises the consensus committee into a communication tree, replacing PBFT’s MAC-authenticated all-to-all communication with a primitive called scalable collective signing (CoSi) that reduces messaging complexity to $O(n)$.
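
For a rough feel of why a tree helps, here is a back-of-the-envelope message count. It is my own simplification and not ByzCoin's actual protocol: I assume one all-to-all phase where every node messages every other node, versus one tree round where a message travels down and back up each edge of a spanning tree.

```python
def all_to_all_messages(n: int) -> int:
    """Every one of n nodes sends one message to each of the other n - 1 nodes."""
    return n * (n - 1)

def tree_round_messages(n: int) -> int:
    """A spanning tree over n nodes has n - 1 edges; one message down and one up per edge."""
    return 2 * (n - 1)

for n in (4, 100, 1000):
    print(n, all_to_all_messages(n), tree_round_messages(n))
```

The first count grows quadratically with committee size and the second linearly, so the gap widens quickly as the committee grows.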

Different schemes also offer differing levels of protection against DoS attacks (taking all honest members of the committee offline). Algorand has a neat solution to this because committee members are not published or notified, but instead learn whether they are on the committee via the output of a verifiable random function. Thus an adversary does not learn who is on the committee until it is too late.
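
The private-lottery idea can be sketched like this. Big caveat: I use a keyed hash (HMAC) as a stand-in for the verifiable random function. It keeps the outcome secret until the member reveals it, but unlike a real VRF it comes with no proof that others can check against a public key. The threshold rule and all names here are hypothetical simplifications, not Algorand's actual sortition.

```python
import hashlib
import hmac

def lottery_ticket(secret_key: bytes, round_seed: bytes) -> float:
    """Stand-in for a VRF output, mapped to [0, 1). NOT publicly verifiable."""
    digest = hmac.new(secret_key, round_seed, hashlib.sha256).digest()
    return int.from_bytes(digest, "big") / 2**256

def on_committee(secret_key: bytes, round_seed: bytes,
                 stake: int, total_stake: int, expected_size: int) -> bool:
    """Each node checks locally whether its ticket falls under a stake-weighted threshold."""
    threshold = min(1.0, expected_size * stake / total_stake)
    return lottery_ticket(secret_key, round_seed) < threshold
```

Only the holder of `secret_key` can evaluate the ticket, so nobody else knows who to target before committee members speak up.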

Regarding incentives:

Classical BFT protocols assume two kinds of players: cooperative and byzantine. This assumption works well in centralized settings where nodes are controlled by the same entity or federation. However, decentralized networks that rely on volunteer nodes need to provide incentives for participation. Most committee-based systems such as ByzCoin use the same incentive model as Bitcoin; however, instead of the most recent miner receiving all reward and fee, it is shared between members of the committee in proportion to their shares.
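
Sharing a reward 'in proportion to their shares' might look like the following sketch. Working in integer coin units and handing the rounding remainder to the largest shareholder are my own assumptions, not something the paper specifies.

```python
def split_reward(reward: int, shares: dict[str, int]) -> dict[str, int]:
    """Split an integer reward among committee members in proportion to their shares."""
    total = sum(shares.values())
    payouts = {member: reward * held // total for member, held in shares.items()}
    # Integer division can leave a few units undistributed; as an assumed
    # policy, give them to the member holding the most shares.
    remainder = reward - sum(payouts.values())
    top = max(shares, key=shares.get)
    payouts[top] += remainder
    return payouts
```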

Solidus includes incentives for information propagation and presents a game-theoretic analysis showing that a miner’s best strategy is to propagate the PoW puzzle and charge a small fee. Smart contract platforms may require clients to include fees to be paid to nodes that execute the contracts.

Sharded hybrid consensus using multiple committees

While single-committee consensus significantly improves performance over single-node consensus, a major limitation is that it is not scalable: adding more members to the committee decreases throughput. This motivated the design of consensus based on multiple committees. To make the system scalable transactions are split among multiple committees (shards) which then process these transactions in parallel.

Last week we looked at Chainspace and Omniledger, which both use this model. With multiple committees, there are questions of how to arrange the topology of committees. Chainspace and Omniledger have a flat topology, whereas Elastico uses a hierarchy. In addition to choosing which nodes are in committees, we now have to decide on an assignment of nodes to committees, which can be done statically or dynamically.

Some transactions may span shards (committees), leading to a need for inter-committee consensus. Atomic commit protocols for such transactions may be driven by the client or by shard leaders.
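
A cross-shard atomic commit can be sketched as a lock-then-commit exchange. This is a heavily simplified illustration with hypothetical names: each `Shard` here is a single object, whereas in a real system every prepare or commit decision would itself be the output of consensus within that shard's committee, and the coordinator role could be played by the client or by a shard leader.

```python
class Shard:
    """Toy shard tracking which of its outputs are unspent or locked."""

    def __init__(self, unspent):
        self.unspent = set(unspent)
        self.locked = set()

    def prepare(self, inputs) -> bool:
        """Phase 1: lock the inputs if all are unspent here and not already locked."""
        inputs = set(inputs)
        if inputs <= self.unspent and not (inputs & self.locked):
            self.locked |= inputs
            return True
        return False

    def commit(self, inputs) -> None:
        """Phase 2 (success): mark the locked inputs as spent."""
        inputs = set(inputs)
        self.unspent -= inputs
        self.locked -= inputs

    def abort(self, inputs) -> None:
        """Phase 2 (failure): release the locks so the inputs can be used again."""
        self.locked -= set(inputs)


def cross_shard_transfer(inputs_by_shard) -> bool:
    """Commit on every shard or on none. Takes a list of (shard, inputs) pairs."""
    prepared = []
    for shard, inputs in inputs_by_shard:
        if shard.prepare(inputs):
            prepared.append((shard, inputs))
        else:
            for done_shard, done_inputs in prepared:
                done_shard.abort(done_inputs)   # roll back earlier locks
            return False
    for shard, inputs in prepared:
        shard.commit(inputs)
    return True
```

For example, spending `a1` on one shard and `b1` on another succeeds once, and a later transaction that tries to reuse `b1` aborts on both shards and leaves the first shard's other inputs unlocked.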

In closing…

We are at a crucial point in the evolution of blockchains. The major hurdle in the widespread adoption of blockchains is their performance and scalability — while improvements have been made, they are nowhere near as ubiquitous as their traditional counterparts. These properties are deeply related to the consensus protocol — and we believe this is where future efforts to improve blockchain performance and scalability should be concentrated.

Probably more than one person has already asked you this very question, so forgive me for the repetition: how do you create the drawings for your posts?

I use an app called Notability on an iPad Pro, and draw them with the stylus…

Got it, thanks. BTW, keep up the great work here and elsewhere!

Why is Proof of Capacity vulnerable to centralization? Burst (which is, btw, the only currently existing coin using PoC afaik) is mined with HDDs, and the argument goes that, as HDDs can easily be bought by anyone, PoC is at least less prone to centralization than PoW, assuming PoW is done with specific ASIC miners, leading to centralization due to higher economies of scale for buying the hardware.