vCorfu: A cloud-scale object store on a shared log Wei et al., NSDI’17

vCorfu builds on the idea of a distributed shared log that we looked at yesterday with CORFU, to construct a distributed object store.

We show that vCorfu outperforms Cassandra, a popular state-of-the-art NoSQL store, while providing strong consistency (opacity, read-own-writes), efficient transactions, and global snapshots at cloud scale.

(It doesn’t outperform ScyllaDB though!)

In addition to CORFU (and a system called Tango which uses CORFU to create distributed shared data structures in C++), vCorfu also borrows a core idea from Replex (which we looked at on The Morning Paper towards the end of last year): we want multiple copies of the data for redundancy, but those replicas don’t all have to store the data in the same way… vCorfu combines a distributed shared log as in CORFU, with materialized streams that store replicas of object-specific subsets of the log data (one stream per object). That turns out to be interesting because the vCorfu object store uses state machine replication to reconstruct the state of an object by replaying the log. In a traditional shared log system, you’d have to replay the whole log, which would of course include updates for many objects beyond just the one of current interest. But with a materialized stream we can be much more efficient because it contains just the updates for the object of interest. Furthermore, we’ve still got a single distributed shared log underpinning everything, so we can still use that for multi-object transactions and so on.

Like other shared log systems, vCorfu supports strong consistency, linearizable reads and transactions, but the locality advantages of materialization enable vCorfu to scale to thousands of clients.

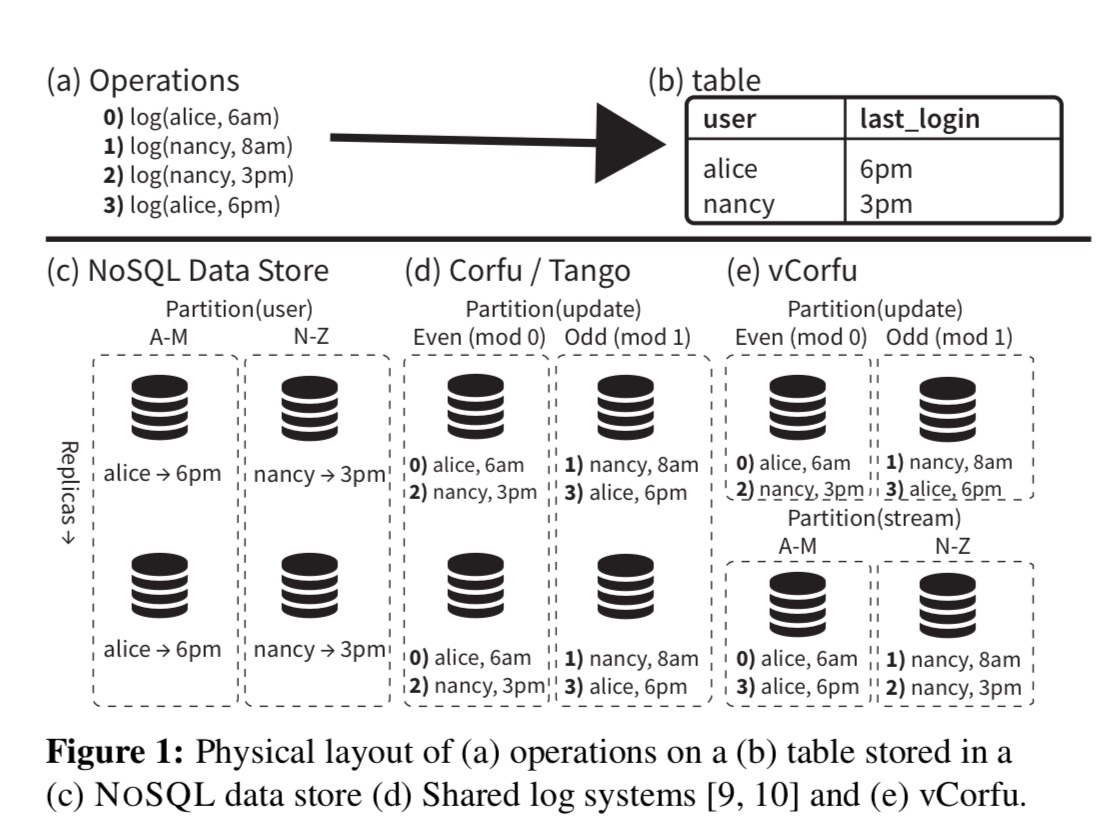

Here’s the pictorial summary of the big idea for an imagined system tracking the last login time of users:

- (a) shows a sequence of operations for two users, alice and nancy

- (b) shows the logical table we’d like to present to clients with most recent login for each user

- (c) illustrates a NoSQL store partitioning scheme which partitions state, each partition having its own replica(s).

- (d) Shows how it might look with a shared log system such as Corfu, partitioning log ranges and then replicating those log partitions

- (e) Shows the vCorfu approach – there is a shared log partitioned by log range as in Corfu, but the ‘replicas’ are materialized streams on a per-object basis, not direct copies of the log partition.

The underlying stream store

Like Corfu, vCorfu uses a sequencer to issue log address tokens, and a client-side mapping (now called a layout) describing how offsets in the global log or in a given materialized stream map to replicas. As with Corfu, vCorfu moves from one configuration (layout) to another using epochs, sealing, and a Paxos-based protocol to ensure all replicas agree on the current layout.

Unlike Corfu, the sequencer issues tokens for both the global log and for individual materialized streams.

vCorfu writes data in the form of updates to both log replicas and stream replicas, each of which are indexed differently. This design replicates data for durability, but enables access to that data with different keys, similar to Replex.

Recovery in vCorfu is handled in a similar manner to Replex, and hence vCorfu can tolerate failures so long as a log replica and stream replica do not fail simultaneously. A stream replica can always be constructed by scanning through the aggregate of the log replicas. Likewise, a log replica can be reconstructed by scanning all stream replicas.

To perform a write, the client writes to the log replica first, then to the stream replica. If a replica previously accepted a write to a given address, the write is rejected and the client must retry with a new log token… Replicas will only serve reads for committed data.

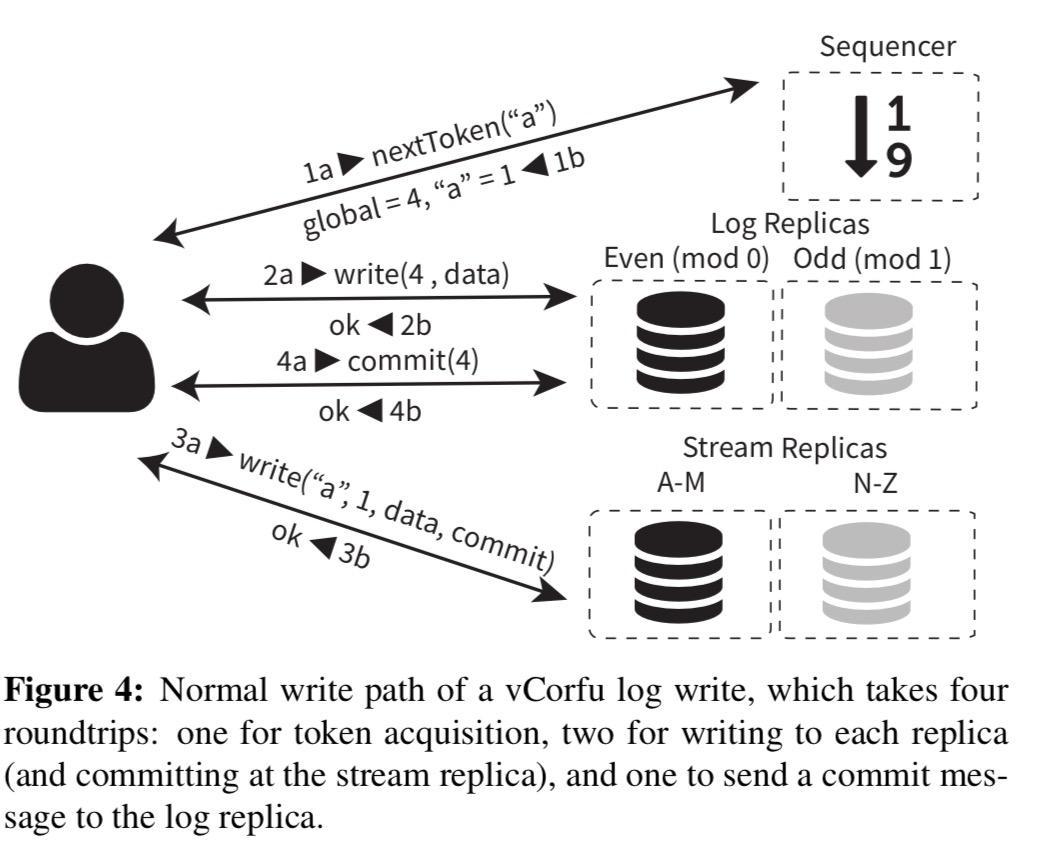

It does however take four roundtrips in normal operation to write an update:

Writes to multiple streams can happen atomically, with the resulting write ordered in the global log by a single log token, but with multiple stream tokens.

There are additional reasons beyond multi-object atomicity to keep the shared log as well as the materialised streams:

- the global log is a convenient scalable mechanism to obtain a consistent snapshot of the entire system (for example, to support backups or long-running read-only transactions)

- the global log provides a unique form of fault tolerance: even if all stream replicas failed, vCorfu could still service requests by falling back to the log replicas only.

The materialised streams are free of holes thanks to the vCorfu commit protocol, and garbage collection is simplified because clients can issues trim commands directly to stream replicas. This release storage locally, and then issue corresponding trim commands to the global log. (See yesterday’s write-up for a quick refresher on holes and trimming if you need one).

In vCorfu, stream replicas also offer remote views on objects: they can play back all the updates for a particular stream locally and directly service requests to clients.

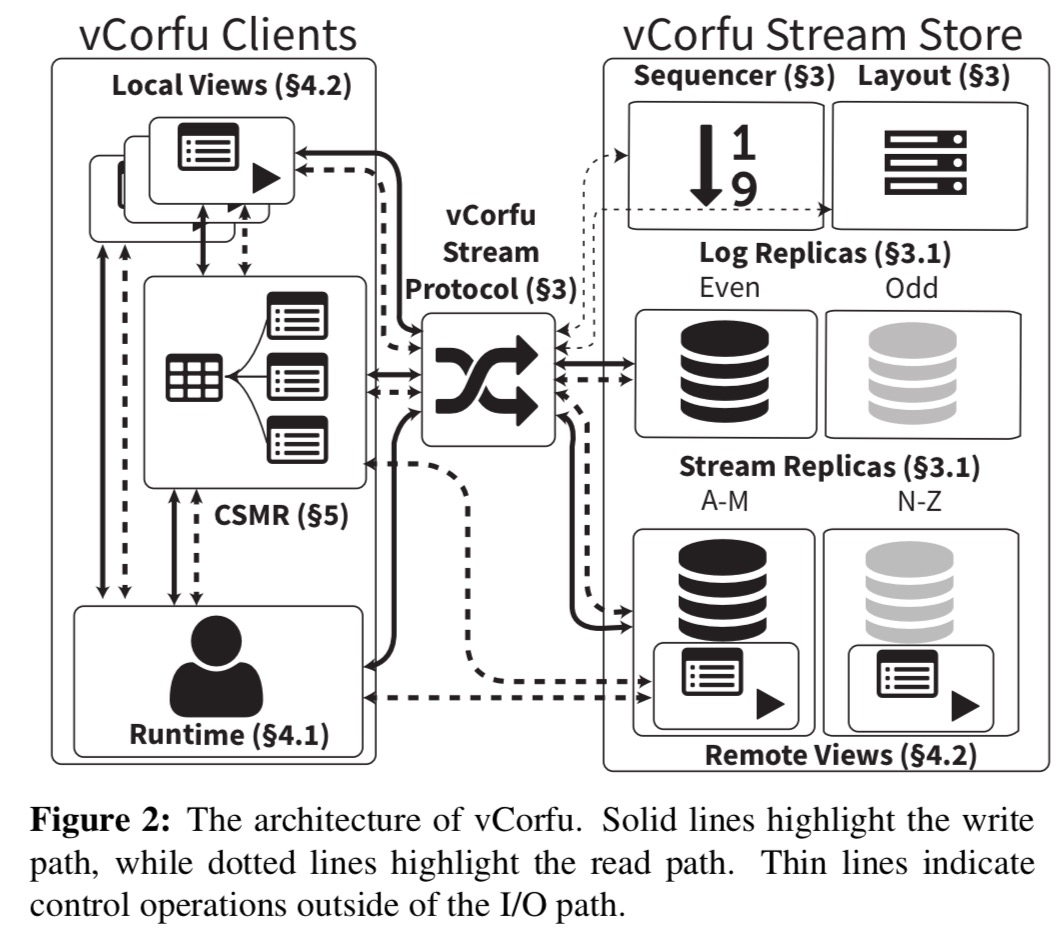

We now know enough to understand the right-hand side of the following vCorfu architecture diagram:

Let’s now take a look at the components on the left-hand side: the vCorfu runtime, local views, and composable state machine replication (CSMR).

The vCorfu object store

To interact with vCorfu as an object store, clients load the vCorfu runtime, a library which manages interactions with the vCorfu stream store. Developers never interact with the store directly, instead, the runtime manipulates the store whenever an object is accessed or modified. The runtime provides each client with a view of objects stored in vCorfu, and these views are synchronized through the vCorfu stream store.

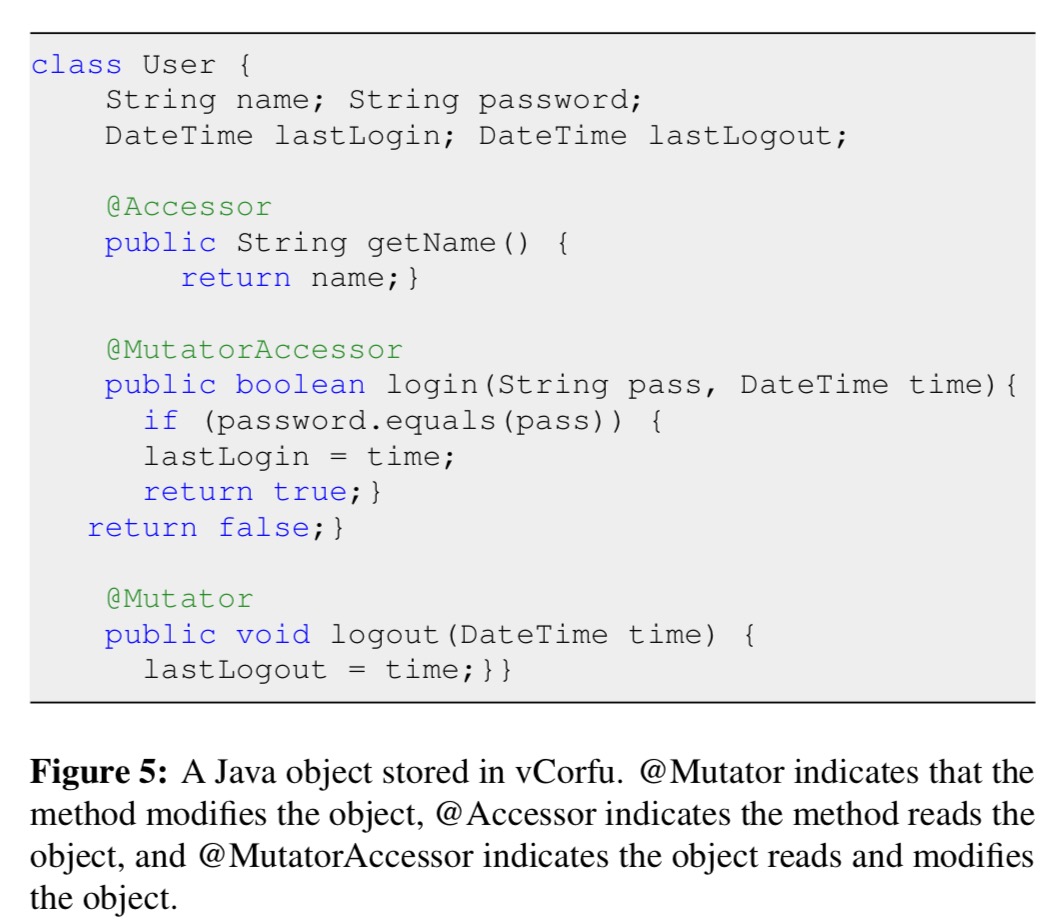

Each object is mapped to a stream that stores its updates. State machine replication is used to provide strongly consistent access to objects. An annotation model helps map Java methods to the log:

@Mutator (and @MutatorAccessor) method calls are serialized and appended to the objects’ stream first before invocation. @Accessor (and @MutatorAccessor) operations cause the runtime to read and apply all updates for the corresponding stream before returning.

In order for SMR to work, each mutator must be determinstic (e.g., a call to

random()ornew Date()is not supported. Many method calls can be easily refactored to take non-deterministic calls as a parameter, as shown in theloginmethod in the example above.

To obtain a view of an object, a client calls open passing in the stream id and specifying whether the view should be local or remote. Local views construct the object state in local memory and are useful for frequently accessed objects.

Transactions execute optimistically against a local snapshot with buffered writes. On transaction completion, if there were no writes then we have a read-only transaction and all is well. If there were writes, the the client informs the sequencer of the log token it obtained at the start of the transaction, and the streams impacted by writes.

If the streams have not changed, the sequencer issues log and stream tokens to the client, which commits the transaction by writing the write buffer. Otherwise, the sequencer issues no token and the transaction is aborted by the client without writing an entry into the log. This important optimization ensures only committed entries are written, so that when a client encounters a transactional commit entry, it may treat it as any other update.

vCorfu provides read-your-writes consistency and strict serializability together with the opacity guarantee that even non-committed transactions are prevented from seeing inconsistent states.

Composable state machine replication

vCorfu allows objects to be composed of other objects – for example, a hash-map of hash-maps – using a technique described as composable state machine replication (CSMR).

Composing SMR objects has several important advantages. First, CSMR divides the state of a single object into several smaller objects, which reduces the amount of state stored at each stream. Second, smaller objects reduce contention and false sharing, providing for higher concurrency. Finally, CSMR resembles how data structures are constructed in memory – this allows us to apply standard data structure principles to vCorfu. For example, a B-tree constructed using CSMR would result in structure with O(logn) time complexity for search, insert and delete operations.

Operations on composite objects which touch multiple child objects need to be marked with @TransactionalMethod which tells the vCorfu runtime to use transactions to make sure objects are modified atomically and read from a consistent snapshot. See section 5 in the paper for more details.

Evaluation

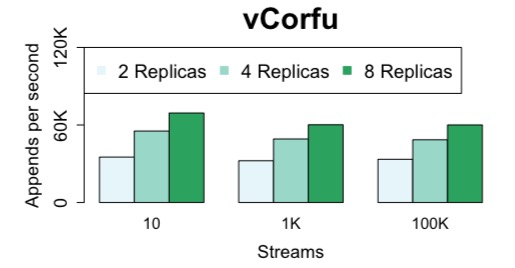

vCorfu is evaluated on a cluster of sixteen 12-core machines. Here we see the fairly small penalty on writes from vCorfu’s materialized streams:

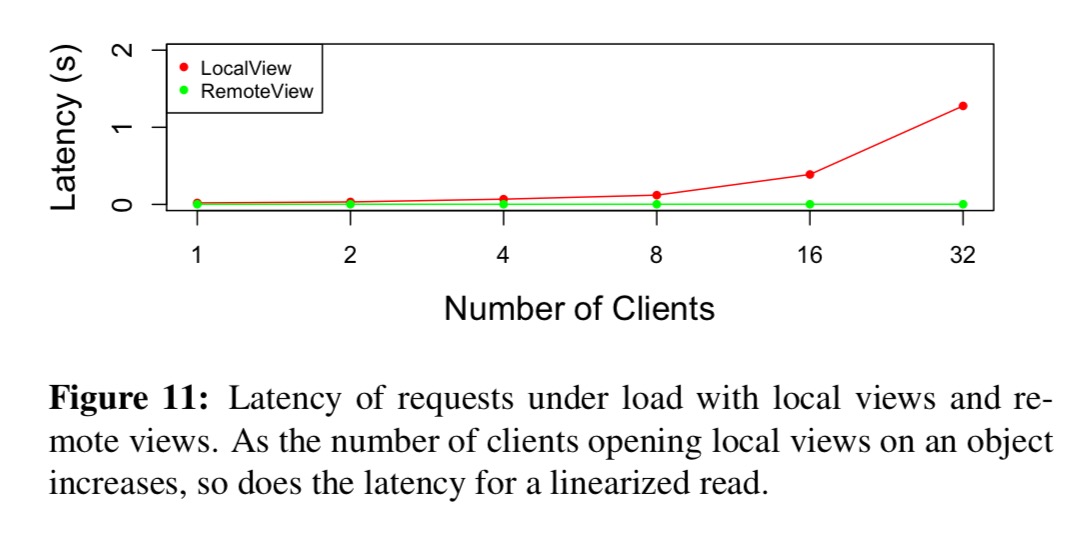

The benefit of supporting remote views is illustrated in the following test:

Here we see an increasing number of clients each appending to their own local views. As the number of views increases, throughput decreases as each client needs to playback the stream and read the updates from all other clients. With 32 clients, read latency is over 1 second. Using a remove view, 1K clients can be supported with millisecond latency.

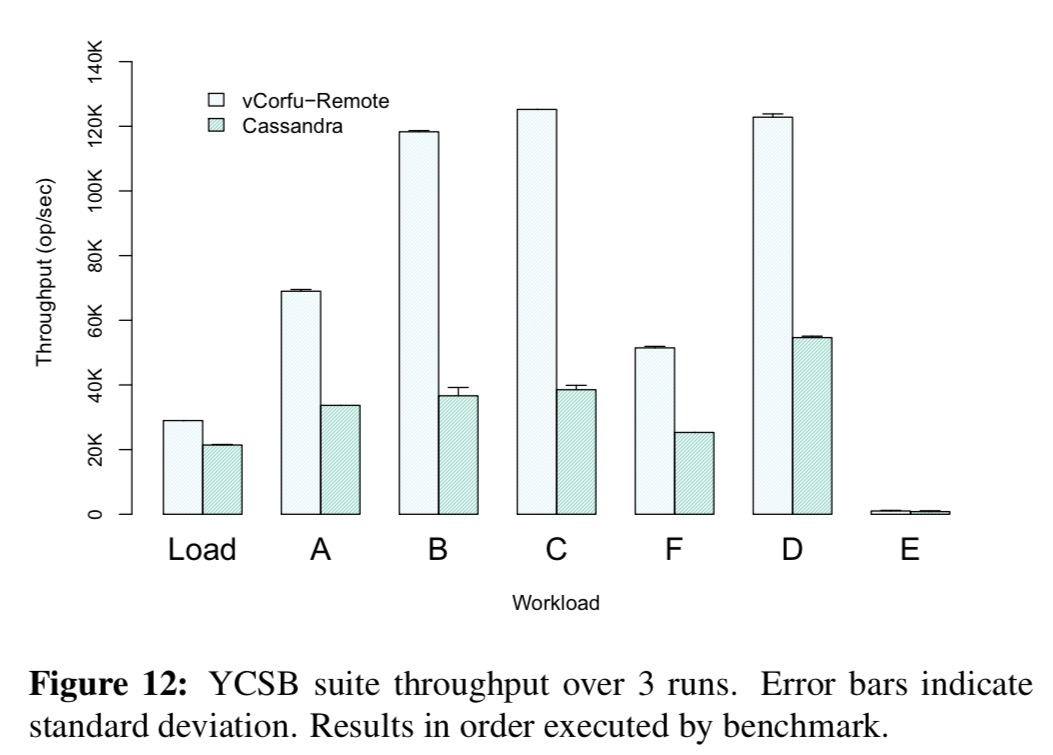

A key-value store is implemented in vCorfu using the CSMR technique and compared against Cassandra on the YCSB benchmark:

vCorfu exhibits comparable write performance to Cassandra – showing that the overhead of the sequencer is low, since both Cassandra and vCorfu must write to two replicas synchronously. However, for reads, Cassandra must read from both replicas in order to not return stale updates, while vCorfu can service the read from the log replica. This leads to significant performance degradation for Cassandra on most of the read-dominated workloads in YCSB. In fact, even with an extra read, Cassandra does not provide the same consistency guarantees as vCorfu as cross-partition reads in Cassandra can still be inconsistent.

See section 6 in the full paper for more details and additional evaluation studies. 5

2 thoughts on “vCorfu: A cloud-scale object store on a shared log”

Comments are closed.