The Ring of Gyges: investigating the future of criminal smart contracts Juels et al, CCS’16

The authors of this paper wrote it out of a concern for the potential abuse of smart contracts for criminal activities, and it does indeed demonstrate a number of ways smart contracts could facilitate crime. It is also, though, another good case study in the considerations that arise when designing smart contracts. After all, the stakes are rather high (not just money but also personal liberty is at risk if caught) and the participants certainly may not be trustworthy.

In this paper, we explore the risk of smart contracts fueling new criminal ecosystems. Specifically, we show how what we call criminal smart contracts (CSCs) can facilitate leakage of confidential information, theft of cryptographic keys, and various real-world crimes (murder, arson, terrorism).

Core to the design of criminal smart contracts (CSCs) is a property the authors call commission-fairness. Commission-fairness says that we must guarantee both the commission of a crime and commensurate payment for the perpetrator, or neither. Of course, the same fairness issue applies to many legitimate smart contracts, in which we want to guarantee either the promised outcome plus payment, or neither. What looks like a fair exchange on the surface can often hide mechanisms that allow a party to a CSC to cheat.

Correct construction of CSCs can thus be quite delicate… As our constructions for commission-fair CSCs show, constructing CSCs is not as straightforward as it might seem, but new cryptographic techniques and new approaches to smart contract design can render them feasible and even practical. … While our focus is on preventing evil, happily the techniques we propose can also be used to create beneficial contracts.

What makes smart contracts interesting for criminal activity?

Decentralized smart contracts have a number of advantages that can benefit criminals:

- They enable fair exchange between mutually distrustful parties based on contract rules, removing the need for physical rendezvous, third-party intermediaries, and reputation systems.

- The minimal interaction required makes things harder for law enforcement: a criminal can set up a contract and walk away, allowing it to execute autonomously with no further interaction.

- The ability to introduce external state into transactions through an oracle service (e.g., Oraclize) broadens the scope of possible CSCs to include calling-card based physical crimes.

Even before smart contracts, just the pseudonymity offered by cryptocurrencies has been happily embraced by criminals, so there’s every reason to think the same path might be taken with smart contracts.

A major cryptovirological “improvement” to ransomware has been use of Bitcoin, thanks to which CryptoLocker ransomware has purportedly netted hundreds of millions of dollars in ransom.

There are of course questions concerning just how good the anonymity provided by Bitcoin really is, but there are also schemes being developed that improve on this situation. And as the authors point out, “Ron and Shamir provide evidence that the FBI failed to locate most of the Bitcoin holdings of Dread Pirate Roberts (Ross Ulbricht), the operator of the Silk Road, even after seizing his laptop.”

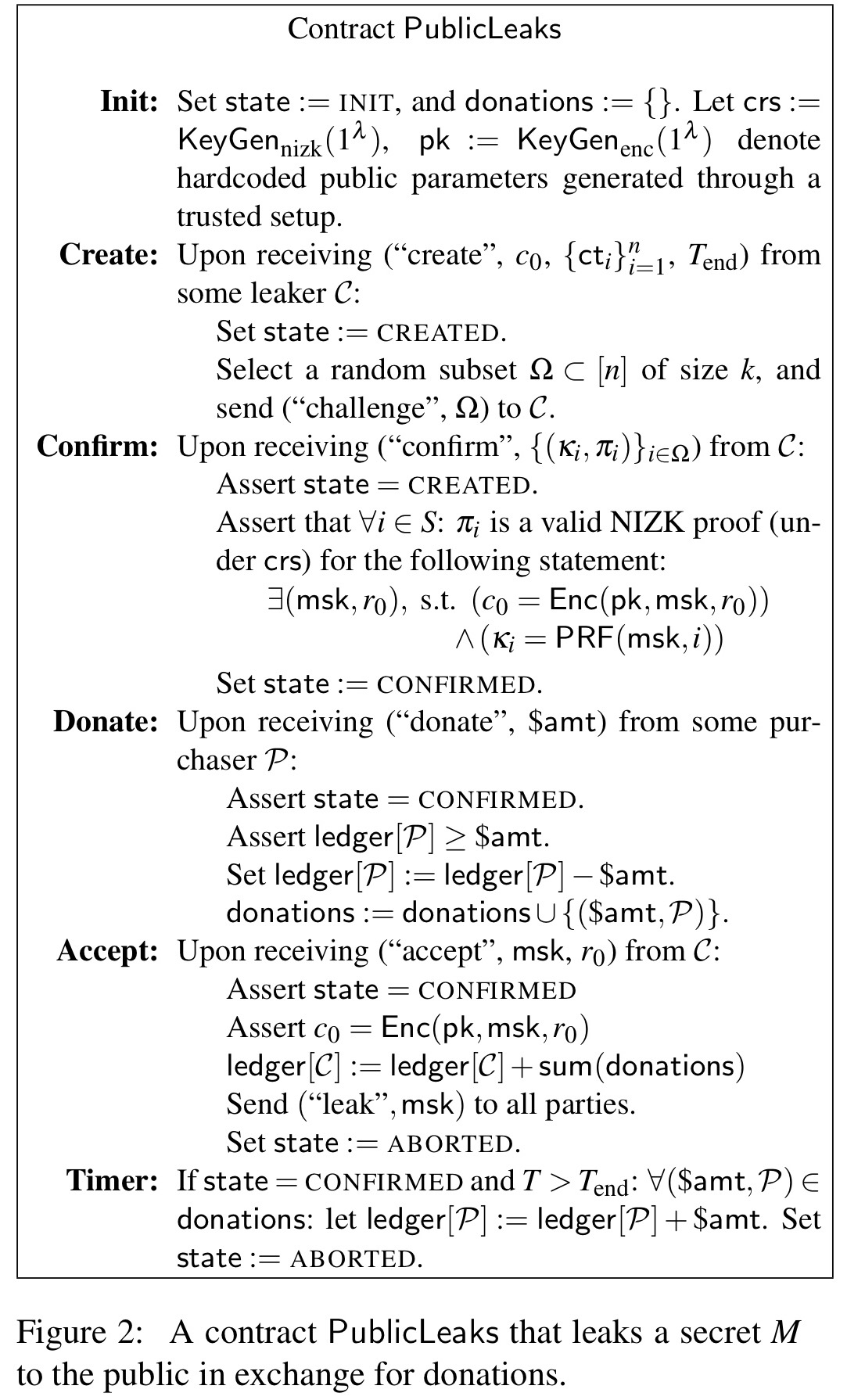

Leaking secrets

The first example scenario is payment-incentivised leakage of information. For example, I have access to pre-release Hollywood movies, trade secrets, government secrets, proprietary source code, industrial designs and so on. If you pay me, I’ll reveal the information (in the basic design, to the world, and then in a revision just to the payer).

Darkleaks is such a system built on top of Bitcoin, but it has some limitations: it can only serve to leak secrets publicly, and it has no facility for fair exchange in a private leakage scenario. With smart contracts, we can do much better…

Some challenges we need to overcome in the design of the contract include:

- How the seller (the contractor) proves they have the information

- How to prevent the seller from delaying release of the information (and collecting their payment at the same time) until after the value of the information has reduced or expired (e.g., a film is publicly released).

- How to prevent the seller from selectively withholding parts of the information

Take a manuscript and partition it into segments. Consider first the scenario where the seller wants to sell it for public release. The seller randomly generates a master secret key, msk, and provides the contract with a cryptographic commitment to it. (pk is the public key of a Bitcoin account for receiving payment.)

- The seller now generates a unique encryption key for each segment from the master secret key using a PRF (Pseudo-Random Function), and encrypts each segment using the appropriate key. The encrypted segments can be placed in some public location (to avoid storing them in the contract), and their hashes sent to the contract. Interested purchasers / donors can thus download the segments, but can’t yet decrypt them. The contract is initialised with the initial commitment, the segment hashes, and an end time. The contract is now in the CREATED state.

- To ensure potential purchasers can trust the leaker, the contract returns a challenge set, a random subset of the segments. In response to the challenge, the leaker invokes the confirm function, providing the keys for the challenged segments to the contract, as well as zero-knowledge proofs that the keys were correctly generated from the master secret key encrypted in the initial commitment. Once the contract has verified these proofs, the contract moves to the CONFIRMED state.

- Potential purchasers / donors can now use the revealed secret keys to decrypt the corresponding segments. If they like what they see, they can pledge money to be paid to the leaker on release of the material.

- At any time, if the set of pledged donations is satisfactory for the leaker, the leaker can call the accept function, decommitting the master secret key. The contract verifies the msk, and if this is successful all donated money is paid to the leaker.

- If the end time has elapsed and donations have not been accepted by the leaker, donors can claim their funds back.
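
The lifecycle above can be sketched in a few lines of Python. This is only an illustration under loud assumptions, not the paper's construction: HMAC-SHA256 stands in for the PRF, a salted hash stands in for the commitment, the zero-knowledge proofs are omitted entirely (the sample keys are only checked against msk at accept time), time is passed in as a plain integer, and the class, method, and state names beyond CREATED and CONFIRMED are hypothetical.

```python
import hashlib
import hmac
import secrets
from enum import Enum


class State(Enum):
    CREATED = 1
    CONFIRMED = 2
    PAID = 3  # hypothetical name for the state after a successful accept


def segment_key(msk: bytes, index: int) -> bytes:
    """Derive the key for one segment; HMAC-SHA256 stands in for the PRF."""
    return hmac.new(msk, index.to_bytes(4, "big"), hashlib.sha256).digest()


def commit(msk: bytes, nonce: bytes) -> bytes:
    """Salted-hash commitment to msk (an assumption, not the paper's scheme)."""
    return hashlib.sha256(nonce + msk).digest()


class LeakContract:
    def __init__(self, commitment: bytes, n_segments: int, end_time: int, n_challenge: int):
        self.commitment = commitment
        self.end_time = end_time
        # Random subset of segment indices whose keys must be opened up front.
        rng = secrets.SystemRandom()
        self.challenge = sorted(rng.sample(range(n_segments), n_challenge))
        self.revealed = {}
        self.pledges = {}
        self.state = State.CREATED

    def confirm(self, keys: dict) -> None:
        # Zero-knowledge proofs are NOT modelled: keys are recorded here
        # and only checked against msk when the leaker calls accept.
        if self.state is not State.CREATED or sorted(keys) != self.challenge:
            raise ValueError("confirm rejected")
        self.revealed = dict(keys)
        self.state = State.CONFIRMED

    def donate(self, donor: str, amount: int, now: int) -> None:
        if self.state is not State.CONFIRMED or now >= self.end_time:
            raise ValueError("donations closed")
        self.pledges[donor] = self.pledges.get(donor, 0) + amount

    def accept(self, msk: bytes, nonce: bytes) -> int:
        """Leaker opens the commitment; returns the total paid out."""
        if self.state is not State.CONFIRMED:
            raise ValueError("nothing to accept")
        if not hmac.compare_digest(commit(msk, nonce), self.commitment):
            raise ValueError("msk does not open the commitment")
        for index, key in self.revealed.items():
            if not hmac.compare_digest(segment_key(msk, index), key):
                raise ValueError("sample keys were not derived from msk")
        self.state = State.PAID
        return sum(self.pledges.values())

    def refund(self, donor: str, now: int) -> int:
        """After the end time, an unpaid donor can reclaim their pledge."""
        if self.state is State.PAID or now < self.end_time:
            raise ValueError("refund not available")
        return self.pledges.pop(donor, 0)
```

Because the proofs are missing, a dishonest leaker in this sketch is only caught at accept time; donors would get their money back, but they would have pledged on the strength of unverified sample keys, which is exactly the gap the zero-knowledge proofs close.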

To support private leakage (see appendix F.3!) the leaker does not reveal the master secret key directly, but instead provides the contract with a ciphertext for a purchaser, along with a proof that the ciphertext is correctly formed.

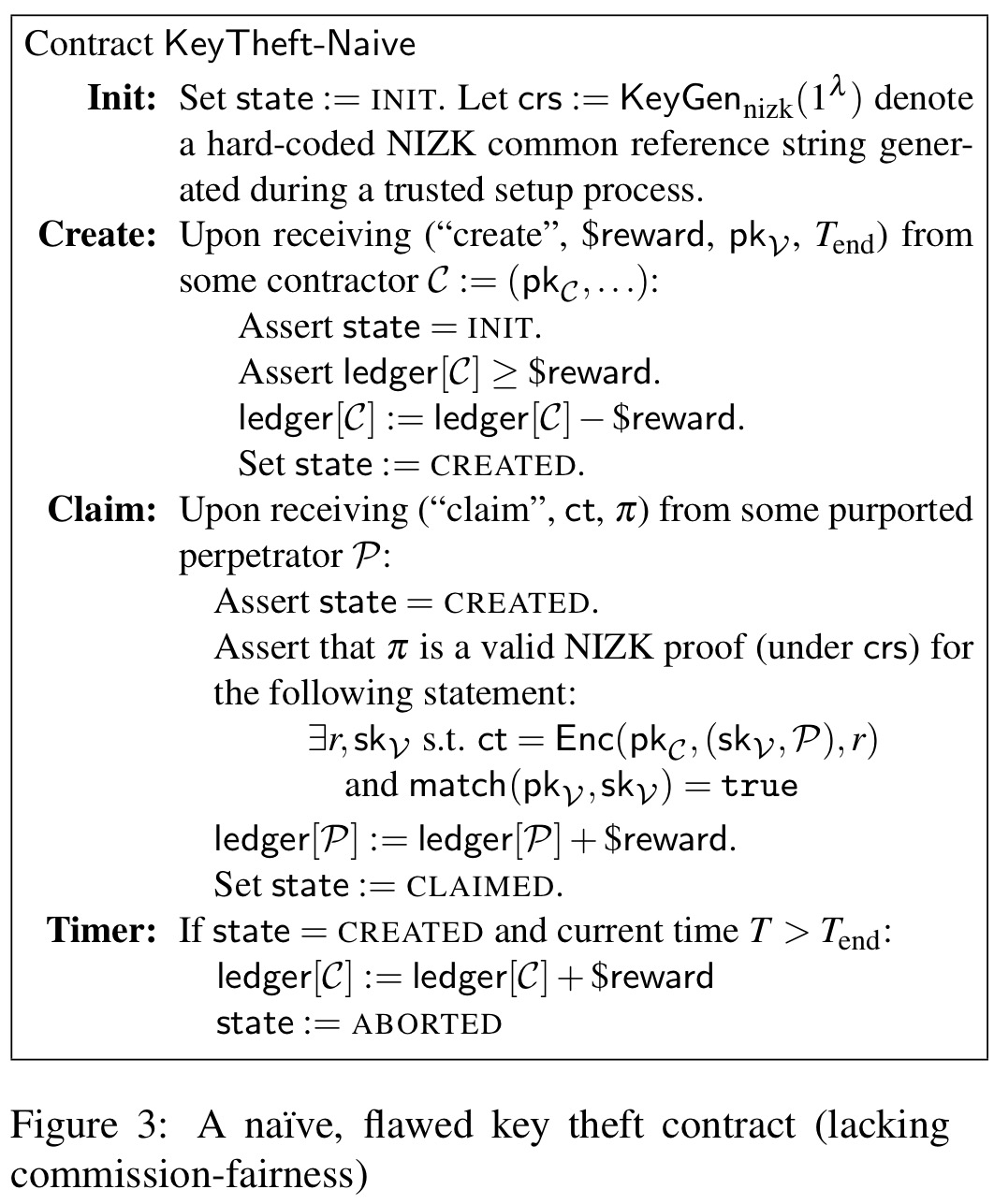

Buying stolen private keys

The second scenario is a contract offering a reward for provision of a private key (e.g., of a victim certificate authority). I’ll go over this one more briefly. An initial attempt at the contract might look something like this:

There are two weaknesses in the above protocol though: it is vulnerable to revoke-and-claim attacks and rushing attacks.

In a revoke-and-claim attack the target CA can revoke its own key and then submit the (now useless) key for payment. So the target of the attack actually ends up profiting from it! The contract is not commission fair in the sense that it doesn’t currently promise to deliver a usable private key.

In a rushing attack when a perpetrator submits a claim, the contractor can learn the private key and then submit their own claim using it. If the contractor can influence ordering (e.g., by attacking the networking layer) they can make their own claim arrive before the valid one.

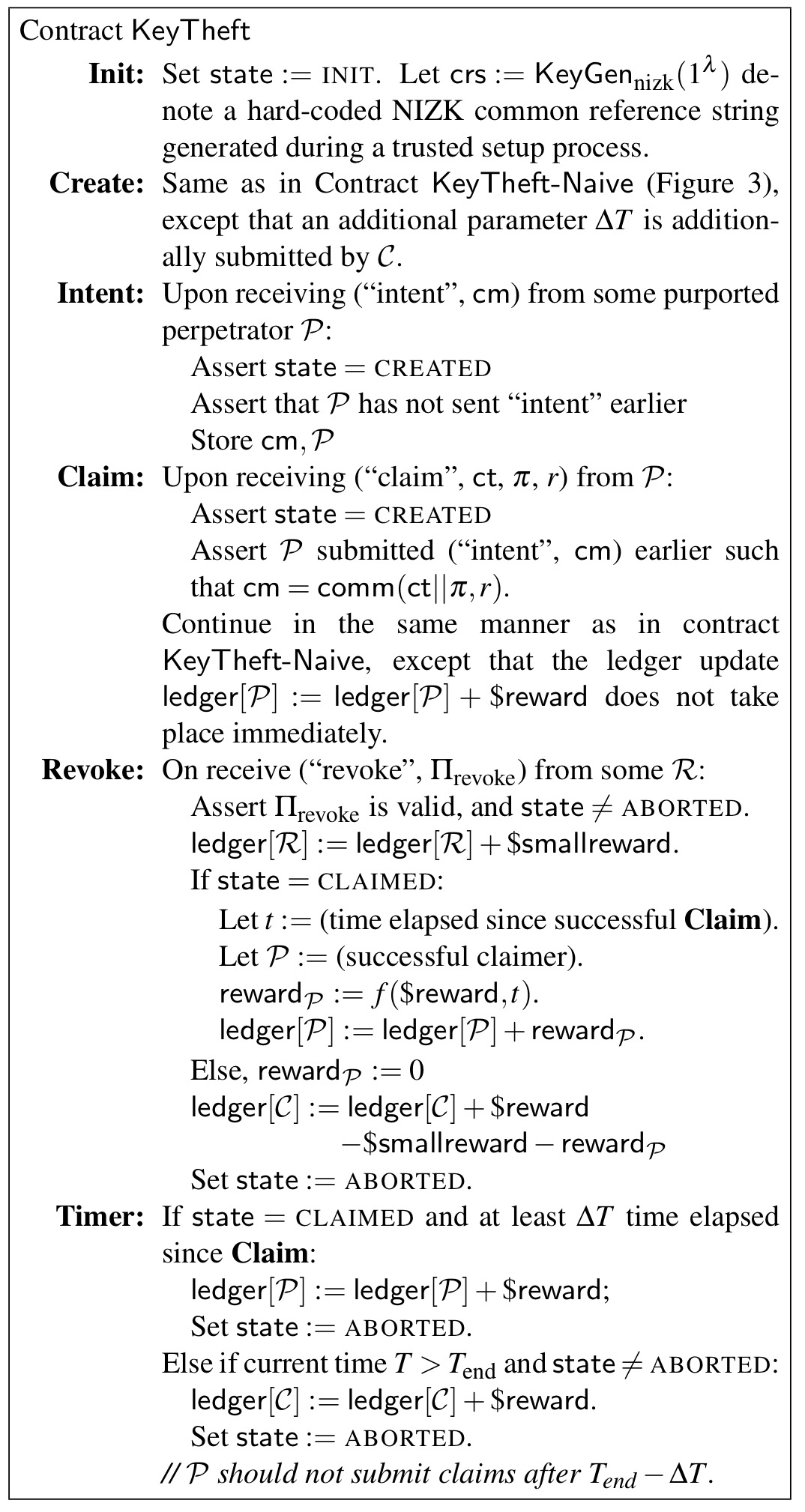

To defend against revoke-and-claim attacks, a reward truncation feature is added to the contract. This enables the contract to accept evidence of a key revocation at any point in time. The evidence could be submitted by the contractor, or the contractor could even offer a small reward to a third-party submitter. The payout for provision of a private key then becomes a function of how long the key remains valid. It seems to me, though, that an attacker who revokes a key and immediately submits a claim still has a good chance of having the claim processed before the revocation is detected.
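
To make the idea of a payout that depends on key lifetime concrete, here is a minimal sketch. The linear schedule, the holding window, and the function and parameter names are all my assumptions for illustration; the paper's actual payout function may differ. The sketch assumes the contract holds the payment for `window` time units after the claim, so that revocation evidence arriving in that period can still reduce it.

```python
from typing import Optional


def truncated_reward(full_reward: int, claim_time: int,
                     revoked_at: Optional[int], window: int) -> int:
    """Payout as a function of how long the key stayed valid after the claim.

    Assumed schedule (hypothetical): linear in the time the key remained
    valid, capped at `window`. No revocation evidence means full payout.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    if revoked_at is None:
        return full_reward
    # A key revoked before the claim was valid for zero time.
    valid_for = max(0, min(revoked_at - claim_time, window))
    return full_reward * valid_for // window
```

With a holding window like this, a victim who revokes first and claims straight afterwards gets nothing, provided someone submits the revocation evidence before the window closes.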

To defend against rushing attacks, the claim is separated into two phases. In the first phase, a perpetrator expresses an intent to claim by submitting a commitment to the real claim message. It is not explicitly stated (that I could see), but I presume this state in the contract ‘locks’ the reward for the submitter. In the next round (once the submitter can see that their commitment has been accepted) they can open the commitment, revealing the claim message, and receive payment.
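
The two-phase claim is a standard commit-reveal pattern, which can be sketched as follows. Several things here are assumptions of mine rather than details from the paper: the commitment is a salted SHA-256 hash that binds the claimant's identity, the contract recognises the stolen key by a hash fingerprint (a real contract would check it against the victim's public key), rounds are plain block numbers, and all class and method names are hypothetical.

```python
import hashlib
import secrets


def claim_commitment(claimant: str, private_key: bytes, nonce: bytes) -> bytes:
    """Commit to (claimant, key); binding the claimant stops replay by others."""
    return hashlib.sha256(nonce + claimant.encode() + private_key).digest()


class KeyTheftContract:
    def __init__(self, key_fingerprint: bytes, reward: int):
        # Stand-in check: SHA-256 of the private key (an assumption).
        self.key_fingerprint = key_fingerprint
        self.reward = reward
        self.intents = {}  # claimant -> (commitment, block it was recorded in)
        self.winner = None

    def intent(self, claimant: str, commitment: bytes, block: int) -> None:
        """Phase 1: record a commitment without revealing the key."""
        self.intents[claimant] = (commitment, block)

    def claim(self, claimant: str, private_key: bytes, nonce: bytes, block: int) -> int:
        """Phase 2: open the commitment in a later block; returns the payout."""
        if self.winner is not None or claimant not in self.intents:
            return 0
        commitment, committed_at = self.intents[claimant]
        if block <= committed_at:
            return 0  # the reveal must come strictly after the intent
        if claim_commitment(claimant, private_key, nonce) != commitment:
            return 0
        if hashlib.sha256(private_key).digest() != self.key_fingerprint:
            return 0
        self.winner = claimant
        return self.reward
```

A rusher who only learns the key when the reveal is broadcast has no earlier commitment of their own, and cannot reuse the honest claimant's commitment because it is bound to that claimant's identity.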

Here’s the extended key theft contract spec:

The paper also discusses variants where the contract targets multiple victims at once, and where false cover claims can be used to leave victims unsure whether or not a successful claim has been committed.

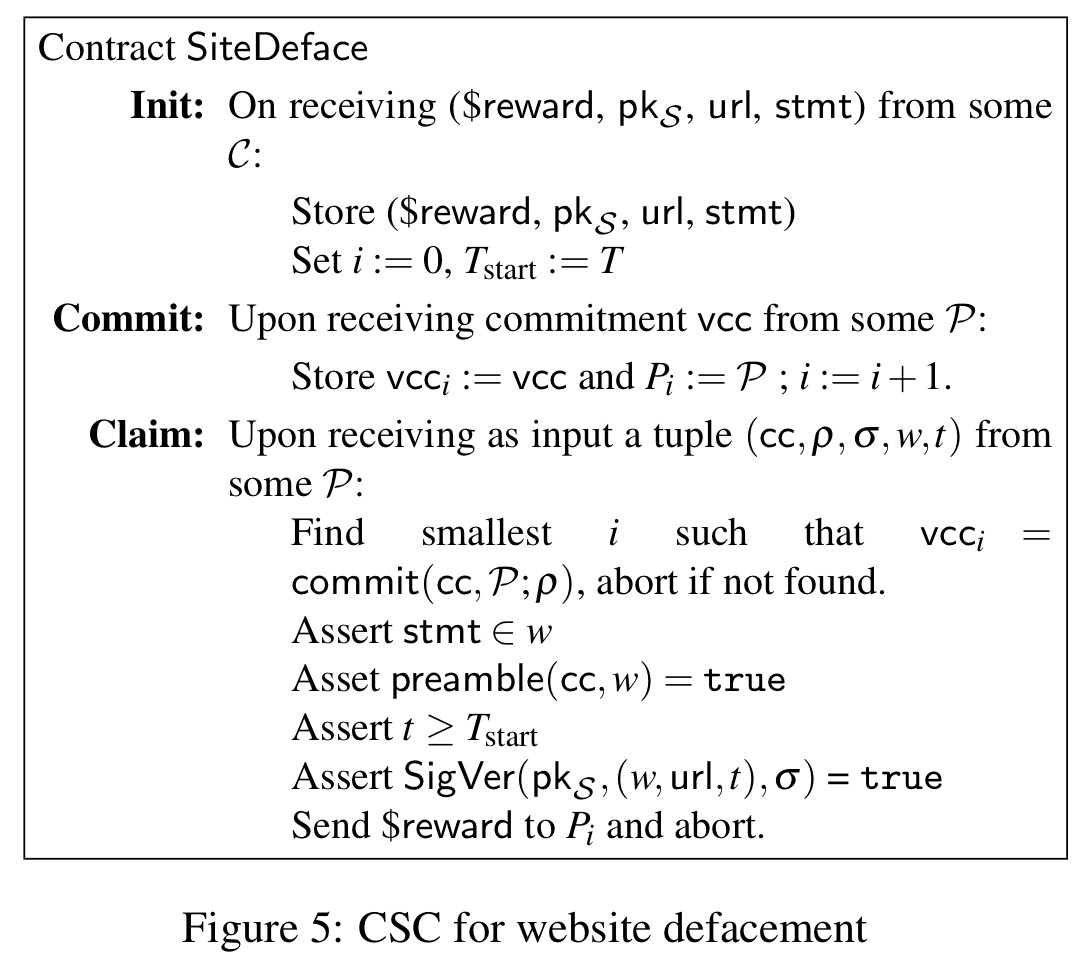

Calling card crimes

A calling card is an unpredictable feature of a to-be-committed crime – for example, a day, time, and place. Given a contract for an assassination for example, an assassin can specify a day, time, and place. If the victim is indeed killed at that time and location, then the contractor can be reasonably sure the assassin is responsible and release payment.

Calling cards, alongside authenticated data feeds, can support a general framework for a wide variety of CSCs.

As an example, consider a contract for website defacement. The contractor specifies a website URL to be hacked and a statement to be displayed.

We assume a data feed that authenticates website content, i.e., one that supplies a representation of the webpage content together with a timestamp, denoted for simplicity in contract time. (For efficiency, the content representation might be a hash of and pointer to the page content.) Such a feed might take the form of, e.g., a digitally signed version of an archive of hacked websites (e.g., zone-h.com).
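
Here is a rough sketch of how a contract might check such a feed entry. It rests on several assumptions of mine: an HMAC under a demo key stands in for the feed's digital signature (a real feed would use public-key signatures so the contract never holds a secret), the entry carries a hash of the page rather than the page itself, the calling card is assumed to be a string that must appear on the page alongside the contractor's statement, and every name in the code is hypothetical.

```python
import hashlib
import hmac

FEED_KEY = b"demo-feed-key"  # hypothetical; stands in for the feed's signing key


def _message(url: str, content_hash: str, timestamp: int) -> bytes:
    return f"{url}|{content_hash}|{timestamp}".encode()


def feed_entry(url: str, content: str, timestamp: int, key: bytes = FEED_KEY) -> dict:
    """What the feed would publish: url, hash of the page, time, and a tag."""
    content_hash = hashlib.sha256(content.encode()).hexdigest()
    tag = hmac.new(key, _message(url, content_hash, timestamp), hashlib.sha256).hexdigest()
    return {"url": url, "content_hash": content_hash, "timestamp": timestamp, "tag": tag}


def entry_is_authentic(entry: dict, key: bytes = FEED_KEY) -> bool:
    expected = hmac.new(
        key,
        _message(entry["url"], entry["content_hash"], entry["timestamp"]),
        hashlib.sha256,
    ).hexdigest()
    return hmac.compare_digest(expected, entry["tag"])


def defacement_confirmed(entry: dict, content: str, url: str, statement: str,
                         calling_card: str, deadline: int) -> bool:
    """True if the authenticated entry shows the statement and calling card."""
    return (
        entry_is_authentic(entry)
        and entry["url"] == url
        and entry["timestamp"] <= deadline
        and hashlib.sha256(content.encode()).hexdigest() == entry["content_hash"]
        and statement in content
        and calling_card in content
    )
```

The page content is supplied alongside the entry and checked against the hash, mirroring the efficiency remark that the feed need only carry a hash of and pointer to the page.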

We can then proceed as follows:

Countermeasures?

As a proposed counter-measure, the authors suggest making smart contracts trustee neutralizable: an authority, quorum of authorities, or some set of system participants would have the power to remove a contract from the blockchain. This would still preserve anonymity for participants. Whether this would be accepted, I’m really not sure.

We emphasize that smart contracts in distributed cryptocurrencies have numerous promising, legitimate applications and that banning smart contracts would be neither sensible nor, in all likelihood, possible. The urgent open question raised by our work is thus how to create safeguards against the most dangerous abuses of such smart contracts while supporting their many powerful, beneficial applications.
