EnclaveDB: A secure database using SGX, Priebe et al., IEEE Security & Privacy 2018

This is a really interesting paper (if you’re into this kind of thing I guess!) bringing together the security properties of Intel’s SGX enclaves with the Hekaton SQL Server database engine. The result is a secure database environment with impressive runtime performance. (In the read-mostly TATP benchmarks, overheads are down around 15%, which is amazing for this level of encryption and security). The paper does a great job showing us all of the things that needed to be considered to make EnclaveDB work so well in this environment.

One of my favourite takeaways is that we don’t always have to think of performance and security as trade-offs:

In this paper, we show that the principles behind the design of a high performance database engine are aligned with security. Specifically, in-memory tables and indexes are ideal data structures for securely hosting and querying sensitive data in enclaves.

Motivation and threat model

We host databases in all sorts of untrusted environments, potentially with unknown database administrators, server administrators, operating systems, and hypervisors. How can we guarantee data security and integrity in such a world? Or, even when we do think we can trust some of these components, how can we minimise the attack surface?

Semantically secure encryption can provide strong and efficient protection for data at rest and in transit, but this is not sufficient because data processing systems decrypt sensitive data in memory during query processing.

With machines with over 1TB memory already commonplace, many OLTP workloads can fit entirely in memory. Good for performance, but bad for security if that’s the one place your data is unprotected.

EnclaveDB assumes an adversary can control everything in the environment bar the code inside enclaves. They can access and tamper with any server-side state in memory, on disk, or over the network. They can mount replay attacks by shutting down the database and attempting to recover from a stale state, and they can attempt to fork the database by sending requests from different clients to different instances (forking attacks).

Denial of service attacks are out of scope, as are side-channel attacks. “Side channels are a serious concern with trusted hardware, and building efficient side channel protection for high performance systems like EnclaveDB remains an open problem.”

High level design

The starting point for EnclaveDB is Hekaton, the in-memory database engine for SQL Server. Hekaton supports in-memory tables and indexes made durable by writing to a persistent log shared with SQL Server. (It would be interesting to see what Hekaton could do with NVM…). Hekaton also supports an efficient form of stored procedures where SQL queries over in-memory tables can be compiled to efficient machine code.

Building on Hekaton suits an enclave for several reasons:

- In-memory tables and indexes are ideal structures for secure hosting in an enclave

- The overheads of software encryption and integrity checking for disk-based tables are eliminated

- Query processing on in-memory data minimises leakage of sensitive information and the number of transitions between the enclave and the host

- Pre-compiled queries reduce the attack surface available to an adversary

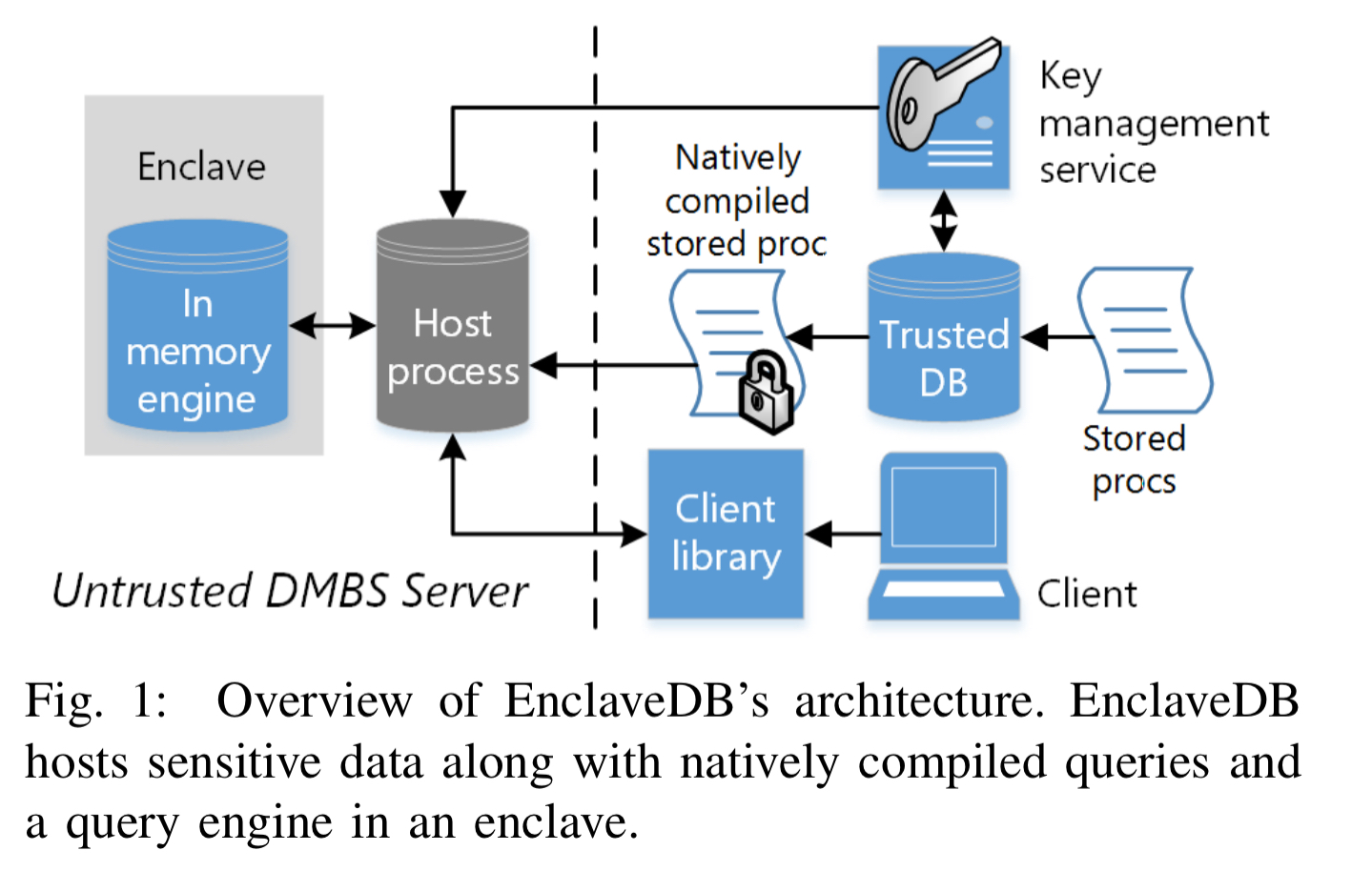

In EnclaveDB an untrusted database server hosts public data, and an enclave hosts sensitive data. The enclave combines a modified Hekaton query engine, natively compiled stored procedures, and a trusted kernel providing the runtime environment for the database engine, and security primitives such as attestation and sealing. Database administration tasks such as backups and troubleshooting are supported by the untrusted server. This is an important separation of concerns in cloud hosting environments where the administrators / operators are not part of the end-user trust model.

As always with enclave-based design, one of the key questions is what goes inside the enclave (larger TCB), and what goes outside (more traffic crossing between secure and insecure environments).

In EnclaveDB, we adopt the principle of least privilege – we introduce a thin layer called the trusted kernel that provides the Hekaton engine with the minimal set of services it requires. The trusted kernel implements some enclave-specific services such as management of enclave memory and enclave threads, and delegates other services such as storage to the host operating system with additional logic for ensuring confidentiality and integrity.

For traditional databases, the entire query processing pipeline is part of the attack surface. With EnclaveDB however, all queries are first compiled to native code and then packaged along with the query engine and the trusted kernel. I.e., they are sealed inside the enclave and can’t be tampered with. This does mean you can only run the set of queries built into the database, and currently implies that any change in schema requires taking the database offline and redeploying the package. Online schema changes via a trusted loader are deferred to future work.
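To make the 'fixed set of queries' idea concrete, here is a minimal sketch of what the restriction looks like from a caller's point of view. It is not EnclaveDB's code: the table, the procedure names, and the `execute` entry point are all hypothetical, and plain Python functions stand in for natively compiled stored procedures.

```python
from types import MappingProxyType

# Hypothetical in-enclave table: subscriber id -> credit balance.
_ACCOUNTS = {1: 100, 2: 250}


def get_balance(sub_id: int) -> int:
    """Stand-in for a natively compiled read-only stored procedure."""
    return _ACCOUNTS[sub_id]


def add_credit(sub_id: int, amount: int) -> int:
    """Stand-in for a natively compiled update procedure."""
    _ACCOUNTS[sub_id] += amount
    return _ACCOUNTS[sub_id]


# The registry is fixed when the package is built (an assumption of this
# sketch). A read-only mapping means nothing can be added at runtime.
PROCEDURES = MappingProxyType({
    "get_balance": get_balance,
    "add_credit": add_credit,
})


def execute(name: str, *args):
    """Single entry point: run a packaged procedure or refuse the request."""
    proc = PROCEDURES.get(name)
    if proc is None:
        raise PermissionError(f"unknown procedure: {name!r}")
    return proc(*args)
```

A request can only name one of the packaged procedures; there is no path by which ad hoc SQL reaches a parser or optimiser, which is where the reduction in attack surface comes from.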

Security considerations

Whereas existing systems require users to associate and manage encryption keys for each column containing sensitive data, EnclaveDB takes advantage of the encryption and integrity protection provided by the SGX memory encryption engine, which kicks in whenever data is evicted from the processor cache. Thus only a single database encryption key is needed. This key is provisioned to a trusted key management service (KMS) along with a policy specifying the enclave that the key can be provisioned to. When an EnclaveDB instance starts it remotely attests with the KMS and receives the key.
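The key release policy can be sketched roughly as follows. This is a toy model under loud assumptions: a SHA-256 hash of the package stands in for the enclave measurement, and the `ToyKMS` class and its methods are hypothetical. Real SGX remote attestation involves hardware-signed quotes that the KMS must verify, which this sketch skips entirely.

```python
import hashlib
import hmac


def measure(package: bytes) -> str:
    """Toy 'measurement': a hash of the enclave package (assumption)."""
    return hashlib.sha256(package).hexdigest()


class ToyKMS:
    """Hypothetical key service: releases a key only to the enclave
    whose measurement matches the policy stored alongside that key."""

    def __init__(self) -> None:
        self._entries = {}  # key name -> (key bytes, allowed measurement)

    def provision(self, name: str, key: bytes, allowed_measurement: str) -> None:
        self._entries[name] = (key, allowed_measurement)

    def release(self, name: str, reported_measurement: str) -> bytes:
        key, allowed = self._entries[name]
        # In a real system the reported measurement would come from a
        # verified attestation quote, not from the caller's say-so.
        if not hmac.compare_digest(allowed, reported_measurement):
            raise PermissionError("enclave measurement does not match policy")
        return key
```

Since the stored procedures are part of the package, changing a single query changes the measurement, and under this policy the modified enclave would not receive the database key.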

Clients connect to EnclaveDB by creating a secure channel with the enclave and establishing a shared session key. The enclave authenticates clients using embedded certificates. All traffic between clients and EnclaveDB is encrypted using the session key.

Once client requests have been validated, the stored procedure executes entirely within the enclave, on tables hosted in the enclave. Return values are encrypted before being written to buffers allocated by the host.

Care must be taken with error conditions, which may reveal sensitive information (think e.g. database integrity constraints such as uniqueness). EnclaveDB translates ‘secret dependent’ errors into a generic error, and then packages the actual error code and message into a single message that is encrypted and delivered to the client. Care must also be taken with profiling and statistics, some of which are sensitive because they reveal properties of sensitive data. EnclaveDB maintains all profiling information in enclave memory for this reason. There is an API to export it in encrypted form, from where it can be imported into a trusted client database for analysis.
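A rough sketch of the error translation step, as I read the description above. The error codes, the `GENERIC_ERROR` value, and the `report_error` function are hypothetical, and the `encrypt` argument is a placeholder for sealing under the client's session key. Passing non-secret errors through unchanged is also my assumption.

```python
import json
from typing import Callable, Tuple

# What the untrusted host gets to see for any secret-dependent failure.
GENERIC_ERROR = {"code": "ERROR", "message": "request failed"}

# Hypothetical error codes whose occurrence depends on secret data,
# e.g. a uniqueness violation reveals that a value already exists.
SECRET_DEPENDENT = frozenset({"UNIQUE_VIOLATION", "FK_VIOLATION"})


def report_error(
    code: str,
    message: str,
    encrypt: Callable[[bytes], bytes],
) -> Tuple[dict, bytes]:
    """Return (error visible to the host, sealed detail for the client)."""
    detail = json.dumps({"code": code, "message": message}).encode()
    sealed = encrypt(detail)  # only the client can open this
    if code in SECRET_DEPENDENT:
        visible = GENERIC_ERROR
    else:
        visible = {"code": code, "message": message}
    return visible, sealed
```

The host learns only that something failed; the client decrypts the sealed detail and sees the real code and message.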

This takes care of the in-memory (in-enclave) parts of EnclaveDB. But there still remains the important question of the logs, which are kept on-disk and outside of the enclave…

Logging and recovery

Since the host cannot be trusted, EnclaveDB must ensure that a malicious host cannot observe or tamper with the contents of the log.

Simply using an encrypted file system leads to high overheads in a database system where the logs can have highly concurrent and write-intensive workloads, with writes concentrated at the tail of the log. That access pattern plays badly with Merkle trees. So the authors introduce a new protocol for checking log integrity and freshness.

Encryption is based on an AEAD scheme (Authenticated Encryption with Associated Data), which writes data with associated authenticated data alongside it. (The implementation uses AES-GCM). A cryptographic hash is maintained for each log data and delta file, and these hashes are verified during recovery. A state-continuity protocol based on Ariadne is used to save and restore the system table within the root file while guaranteeing integrity, freshness, and liveness.
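The per-file hash check can be illustrated with a small sketch. It covers only the 'hash per file, verified during recovery' part and leaves out the AES-GCM encryption (Python's standard library has no AES-GCM). The `FileHashes` class, the running-hash approach, and the length prefix on each block are my assumptions, not details from the paper.

```python
import hashlib
import hmac
from typing import Dict, Iterable


def _absorb(h, block: bytes) -> None:
    # Length-prefix each block so that block boundaries are part of
    # what gets hashed (assumption of this sketch).
    h.update(len(block).to_bytes(8, "big"))
    h.update(block)


class FileHashes:
    """Trusted-side record: one running SHA-256 per log file."""

    def __init__(self) -> None:
        self._running: Dict[str, "hashlib._Hash"] = {}

    def append(self, file_id: str, block: bytes) -> None:
        """Call whenever a block is written out to the untrusted host."""
        _absorb(self._running.setdefault(file_id, hashlib.sha256()), block)

    def digest(self, file_id: str) -> str:
        return self._running[file_id].hexdigest()


def verify_on_recovery(blocks: Iterable[bytes], expected_digest: str) -> bool:
    """Re-hash what the host hands back and compare with the trusted digest."""
    h = hashlib.sha256()
    for block in blocks:
        _absorb(h, block)
    return hmac.compare_digest(h.hexdigest(), expected_digest)
```

Modifying, reordering, or truncating the blocks of a file changes the recomputed digest, so recovery can reject the file. What this does not catch on its own is a host that replays an old but internally consistent set of files and digests, which is where the state-continuity protocol and monotonic counters come in.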

The protocol depends on monotonic counters. The SGX built-in counters are too slow to meet the latency and throughput requirements of EnclaveDB, so EnclaveDB uses a dedicated monotonic counter service implemented using replicated enclaves.
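Here is a deliberately naive sketch of how a monotonic counter gives freshness. It is not the Ariadne protocol and not EnclaveDB's implementation: `CounterService`, `save_state`, and `restore_state` are hypothetical names, an in-process object stands in for the replicated counter service, and the sketch ignores crashes between incrementing the counter and persisting the state, which is the liveness side of the problem.

```python
import hashlib
import hmac
from typing import Tuple

Snapshot = Tuple[int, bytes, bytes]  # (counter value, state, MAC tag)


class CounterService:
    """Stand-in for a trusted monotonic counter: it only ever goes up."""

    def __init__(self) -> None:
        self._value = 0

    def increment(self) -> int:
        self._value += 1
        return self._value

    def read(self) -> int:
        return self._value


def _tag(key: bytes, n: int, state: bytes) -> bytes:
    # Bind the counter value to the state under a key known only to the enclave.
    return hmac.new(key, n.to_bytes(8, "big") + state, hashlib.sha256).digest()


def save_state(key: bytes, state: bytes, counter: CounterService) -> Snapshot:
    """Bump the counter and produce a snapshot for untrusted storage."""
    n = counter.increment()
    return n, state, _tag(key, n, state)


def restore_state(key: bytes, snapshot: Snapshot, counter: CounterService) -> bytes:
    """Accept a snapshot only if it is authentic and is the latest one."""
    n, state, tag = snapshot
    if not hmac.compare_digest(tag, _tag(key, n, state)):
        raise ValueError("snapshot has been tampered with")
    if n != counter.read():
        raise ValueError("stale snapshot (possible replay)")
    return state
```

A host that shuts the database down and offers an older snapshot at recovery fails the counter check, even though that snapshot's MAC is perfectly valid.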

EnclaveDB also carefully tracks log records to identify those that must exist in the log on recovery. Full details of the logging and recovery system, including a proof sketch, can be found in section V of the paper. That’s worthy of a detailed study in its own right.

Evaluation

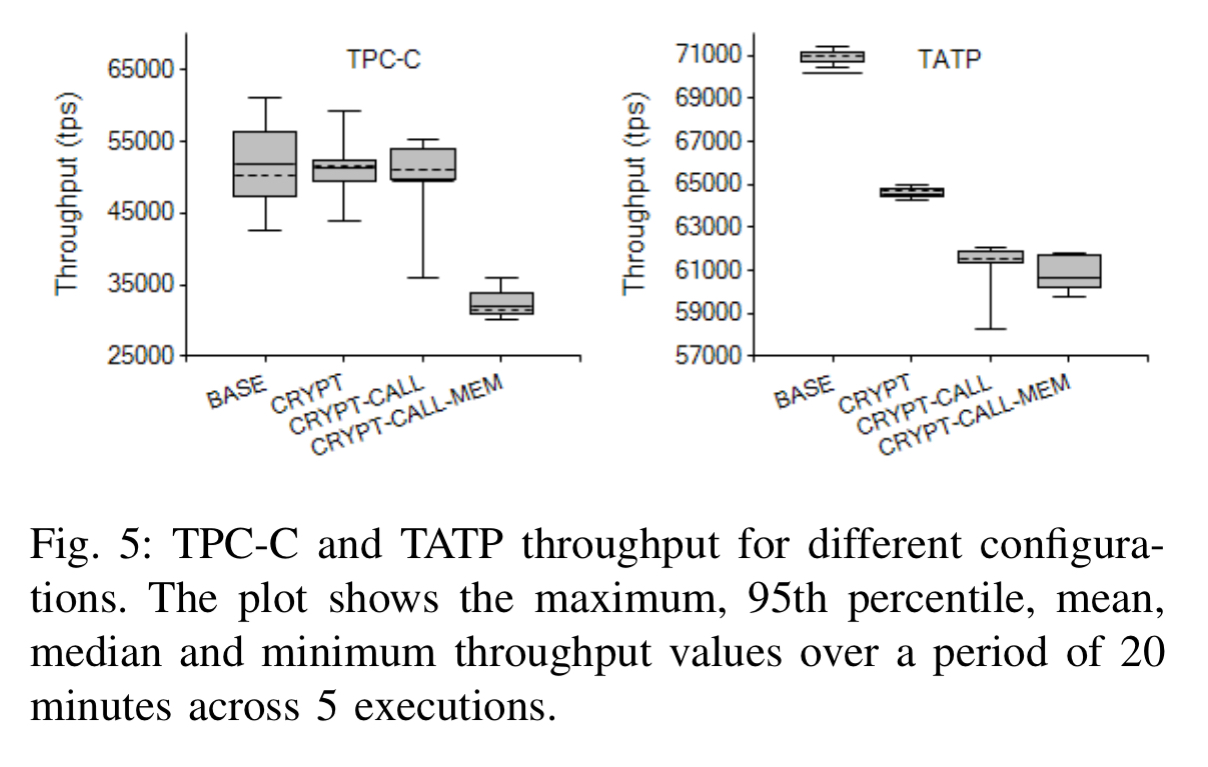

Evaluation is a little tricky since the current generation of Intel Skylake CPUs tops out at 128MB for the Enclave Page Cache. So small databases can be tested for real, and the results for larger database sizes are obtained from a carefully calibrated performance model. The authors run both TPC-C and TATP benchmarks (TATP simulates a typical location register database as used by mobile carriers to store subscriber information).

The TCB (trusted computing base) for both of these benchmarks (remember all the queries are pre-compiled and included) comes in at around 360Kloc (vs. e.g. 10Mloc for the SQL Server OLTP engine).

In the charts that follow, the most interesting comparison is between BASE (Hekaton running outside enclaves) and CRYPT-CALL-MEM which includes all the enclave and encryption overheads.

For TPC-C there’s a drop in throughput of around 40% compared to the BASEline (that’s still about 2 orders of magnitude better than prior work). For TATP the throughput overhead is around 15%, with latency increased by around 22%.

Based on these experiments, we can conclude that EnclaveDB achieves a very desirable combination of strong security (confidentiality and integrity) and high performance, a combination we believe should be acceptable to most users.

What next?

There are many ways EnclaveDB can be improved, such as support for online schema changes, dynamically changing the set of authorized users, and further reducing the TCB. But we believe that EnclaveDB lays a strong foundation for the next generation of secure databases.
