Experimental Security Analysis of a Modern Automobile – Koscher et al. 2010

Today’s paper gives us a frightening insight into the (lack of) security of the distributed computing systems controlling modern cars. The results described were obtained from testing a 2009 model year car. Surely today’s cars are better than this? In the UK, it turns out, today’s cars are almost exactly this: the average age of a car on UK roads is 7.8 years. In the US, the average car on the road is even older at 11.5 years. Of course, it’s possible that the software on those cars could have been updated back at dealerships in the intervening years – but that wouldn’t address all of the issues in the paper, and evidence suggests plenty of vulnerabilities still exist.

I am the Cavalry is a grassroots organisation focused on issues where computer security intersects with public safety and human rights. One of their major focus areas is automotive security. Their car-hacking news timeline shows that vulnerabilities in car computer systems remain very much an issue. Just over a year ago (August 2014), I am the Cavalry published an open letter to automotive manufacturers urging them to address some of these issues and setting out a five-star program for automotive security. Last month an interview with Josh Corman in Forbes demonstrated that automotive manufacturers still have a long way to go to meet these standards.

While today’s paper is focused on defenses against attackers, just as alarming to me are the risks imposed by poor software development practices. We all know how hard it is to build distributed systems with the desired safety and liveness properties. What hope do you have of achieving that if you start out with poor software development processes and code that ends up with over ten thousand global variables? Consider the evidence that came to light from the ‘Unintended Acceleration’ lawsuit against Toyota:

After reviewing Toyota’s software engineering process and the source code for the 2005 Toyota Camry, both [of the expert witnesses] concluded that the system was defective and dangerous, riddled with bugs and gaps in its failsafes that led to the root cause of the crash.

and…

… myriad problems with Toyota’s software development process and its source code – possible bit flips, task deaths that would disable the failsafes, memory corruption, single-point failures, inadequate protections against stack overflow and buffer overflow, single-fault containment regions, thousands of global variables. The list of deficiencies in process and product was lengthy.

The entire piece is damning and well worth a read. Follow the links through to the preliminary drafts of the expert testimony to get the full picture.

Anyway, enough with the ranting, let’s take a look at the paper…

Today’s automobile is no mere mechanical device, but contains a myriad of computers. These computers coordinate and monitor sensors, components, the driver, and the passengers. Indeed, one recent estimate suggests that the typical luxury sedan now contains over 100 MB of binary code spread across 50–70 independent computers—Electronic Control Units (ECUs) in automotive vernacular—in turn communicating over one or more shared internal network buses. While the automotive industry has always considered safety a critical engineering concern (indeed, much of this new software has been introduced specifically to increase safety, e.g., Anti-lock Brake Systems) it is not clear whether vehicle manufacturers have anticipated in their designs the possibility of an adversary… We have endeavored to comprehensively assess how much resilience a conventional automobile has against a digital attack mounted against its internal components. Our findings suggest that, unfortunately, the answer is “little.”

Many features of a car require coordination across ECUs, and the industry has standardized on a small number of digital buses such as Controller Area Network (CAN). In fact, the typical car normally contains multiple buses covering different component groups. For example, you may have a high-speed bus for things that need real-time telemetry, and a separate low-speed bus for things like lights and doors.

While it seems that such buses could be physically isolated (e.g., safety critical systems on one, entertainment on the other), in practice they are “bridged” to support subtle interaction requirements. For example, consider a car’s Central Locking Systems (CLS), which controls the power door locking mechanism. Clearly this system must monitor the physical door lock switches, wireless input from any remote key fob (for keyless entry), and remote telematics commands to open the doors. However, unintuitively, the CLS must also be interconnected with safety critical systems such as crash detection to ensure that car locks are disengaged after airbags are deployed to facilitate exit or rescue.

There are also telematics systems that link the car over cellular networks. In today’s paper, the focus is on what an adversary can do once access to the car’s network is obtained by some means.

While we leave a full analysis of the modern automobile’s attack surface to future research, we briefly describe here the two “kinds” of vectors by which one might gain access to a car’s internal networks. The first is physical access. Someone—such as a mechanic, a valet, a person who rents a car, an ex-friend, a disgruntled family member, or the car owner—can, with even momentary access to the vehicle, insert a malicious component into a car’s internal network via the ubiquitous OBD-II port (typically under the dash). The attacker may leave the malicious component permanently attached to the car’s internal network or, as we show in this paper, they may use a brief period of connectivity to embed the malware within the car’s existing components and then disconnect… The other vector is via the numerous wireless interfaces implemented in the modern automobile. In our car we identified no fewer than five kinds of digital radio interfaces accepting outside input, some over only a short range and others over indefinite distance. While outside the scope of this paper, we wish to be clear that vulnerabilities in such services are not purely theoretical. We have developed the ability to remotely compromise key ECUs in our car via externally-facing vulnerabilities…

The test cars were examined in three settings – on a lab bench with hardware extracted from the vehicle, in a stationary car on jacks, and while driving on the road in a closed aerodrome. To facilitate these experiments, the team wrote CarShark, a custom CAN-bus analyzer and packet injection tool.

The CAN protocol has a number of inherent security weaknesses including physical and logical broadcast of all packets to all nodes, extreme vulnerability to DoS attacks, no authenticator fields (so any component can indistinguishably send any packet to any other component), and weak access control. ECUs issue fixed challenges (seeds) and the corresponding keys are also stored in the ECU. “Many of the seed-to-key algorithms in use today are known by the car tuning community.” Firmware upgrades and open diagnostics via the DeviceControl service both provide easy routes to bypass security.
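To make the "no authenticator fields" point concrete, here is a minimal toy model in Python. It is only a sketch: `Frame`, `ToyBus`, and the identifier `0x123` are made up for illustration and are not taken from the paper or from any real CAN library. The frame carries an identifier and a payload but nothing that names the sender, and the bus delivers every frame to every node.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Frame:
    """Simplified CAN data frame: an identifier plus up to 8 payload bytes.

    There is deliberately no sender or authenticator field, mirroring the
    weakness described above.
    """
    arbitration_id: int
    data: bytes

    def __post_init__(self) -> None:
        if len(self.data) > 8:
            raise ValueError("classic CAN payloads are at most 8 bytes")


class ToyBus:
    """Toy broadcast bus: every attached node sees every frame."""

    def __init__(self) -> None:
        self._receivers: List[Callable[[Frame], None]] = []

    def attach(self, receiver: Callable[[Frame], None]) -> None:
        self._receivers.append(receiver)

    def send(self, frame: Frame) -> None:
        # Broadcast: no addressing, and no record of who transmitted.
        for receiver in self._receivers:
            receiver(frame)


bus = ToyBus()
seen_by_radio: List[Frame] = []
seen_by_locks: List[Frame] = []
bus.attach(seen_by_radio.append)
bus.attach(seen_by_locks.append)

HYPOTHETICAL_LOCK_ID = 0x123  # made-up identifier, not from the paper

# One frame from the "legitimate" controller, one from any other node.
bus.send(Frame(HYPOTHETICAL_LOCK_ID, b"\x01"))
bus.send(Frame(HYPOTHETICAL_LOCK_ID, b"\x01"))

# The receiver holds two identical frames and cannot tell the senders apart.
indistinguishable = seen_by_locks[0] == seen_by_locks[1]
```

Both listeners end up with the same two frames, and nothing in either frame lets a receiver decide which node transmitted it.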

Given the weaknesses with the CAN access control sequence, the role of “tester” is effectively open to any node on the bus and thus to any attacker. Worse, in Section IV-C below we find that many ECUs in our car deviate from their own protocol standards, making it even easier for an attacker to initiate firmware updates or DeviceControl sequences—without even needing to bypass the challenge-response protocols.
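One consequence of fixed challenges can be sketched in a few lines. The model below is an assumption-laden illustration, not the car's actual protocol: `ToyEcu`, `seed_to_key`, the HMAC-SHA256 stand-in, and the secret are all hypothetical. It contrasts an ECU that always issues the same seed with one that draws a fresh seed per session, and checks whether an eavesdropper who recorded one valid key can replay it.

```python
import hashlib
import hmac
import secrets
from typing import Callable


def seed_to_key(seed: bytes, secret: bytes) -> bytes:
    """Stand-in seed-to-key function (HMAC-SHA256, chosen for illustration)."""
    return hmac.new(secret, seed, hashlib.sha256).digest()


class ToyEcu:
    """Hypothetical ECU that unlocks when the key matches the pending seed."""

    def __init__(self, secret: bytes, next_seed: Callable[[], bytes]) -> None:
        self._secret = secret
        self._next_seed = next_seed
        self._pending_seed = b""
        self.unlocked = False

    def request_seed(self) -> bytes:
        self._pending_seed = self._next_seed()
        return self._pending_seed

    def send_key(self, key: bytes) -> bool:
        expected = seed_to_key(self._pending_seed, self._secret)
        self.unlocked = hmac.compare_digest(key, expected)
        return self.unlocked


def replay_succeeds(ecu: ToyEcu, secret: bytes) -> bool:
    # Session 1: a legitimate tester unlocks the ECU; an eavesdropper on the
    # broadcast bus records the key that was sent.
    recorded_key = seed_to_key(ecu.request_seed(), secret)
    assert ecu.send_key(recorded_key)

    # Session 2: the eavesdropper, who never learned the secret, requests a
    # seed and simply replays the recorded key.
    ecu.request_seed()
    return ecu.send_key(recorded_key)


SECRET = b"hypothetical-shared-secret"
fixed_seed_ecu = ToyEcu(SECRET, lambda: b"\x12\x34")
fresh_seed_ecu = ToyEcu(SECRET, lambda: secrets.token_bytes(16))

fixed_result = replay_succeeds(fixed_seed_ecu, SECRET)
fresh_result = replay_succeeds(fresh_seed_ecu, SECRET)
```

In this toy model the replay unlocks the fixed-seed ECU but not the fresh-seed one. With a fixed seed, one observed exchange is enough; the attacker does not even need to know the seed-to-key algorithm.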

There are several risk-mitigation protocols which a CAN-implementation should comply with. In the car under test, many of these were not upheld:

- ECUs should reject the ‘disable CAN communications’ command when it is unsafe to do so – but in the test car communications could be disabled even while driving on the road.

- ECUs should refuse to be reflashed while the car is driving – but in the test car they could be.

- Non-compliant access control implementations: the same fixed seed and key are common to all units with the same part number – and, even worse, the result of the challenge-response protocol is not used anywhere, so you can, for example, reflash the unit at any time without authenticating.

- Access to sensitive memory addresses is supposed to be denied, but in the test car reflashing keys and even the entire memory of the telematics unit could be read without authenticating.

- Unsafe DeviceControl override requests (e.g. releasing the brakes) should be rejected – not so in the test car which accepted them without authentication.

- Gateways between the high and low speed networks should only be reprogrammable from the high-speed network. Not true in the test car – the telematics unit is on both networks and can only be reprogrammed from the low-speed network. Thus a low-speed device can attack the high-speed network by bridging through the telematics unit.
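The bridging problem in the last item can be sketched as a small reachability check. This is a toy model under stated assumptions: the function `reachable_buses` and the placement of the radio and brake controller are hypothetical, and only the telematics unit's attachment to both networks comes from the text above. An ECU counts as a bridge when it sits on a bus the attacker has already reached and can be reprogrammed from that bus.

```python
from collections import deque
from typing import Dict, Set


def reachable_buses(
    start_bus: str,
    attached_to: Dict[str, Set[str]],
    reprogrammable_from: Dict[str, Set[str]],
) -> Set[str]:
    """Breadth-first search over buses an attacker can reach from start_bus."""
    reached = {start_bus}
    queue = deque([start_bus])
    while queue:
        bus = queue.popleft()
        for ecu, buses in attached_to.items():
            # An ECU becomes a bridge only if it sits on this bus AND can be
            # reflashed from it.
            if bus in buses and bus in reprogrammable_from.get(ecu, set()):
                for other in buses - reached:
                    reached.add(other)
                    queue.append(other)
    return reached


# Illustrative topology; radio and brake-control placement is an assumption.
attached_to = {
    "telematics": {"low-speed", "high-speed"},
    "radio": {"low-speed"},
    "brake-control": {"high-speed"},
}

# Behaviour described for the test car: reflashable from the low-speed bus.
as_tested = {"telematics": {"low-speed"}}
# Behaviour the rule above asks for: reflashable only from the high-speed bus.
as_specified = {"telematics": {"high-speed"}}

from_low_tested = reachable_buses("low-speed", attached_to, as_tested)
from_low_specified = reachable_buses("low-speed", attached_to, as_specified)
```

Under the "as tested" configuration an attacker starting on the low-speed bus reaches both buses; under the "as specified" configuration they stay confined to the low-speed bus.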

Once you’re on the network, what can you actually do? Koscher et al. investigate via a combination of packet sniffing, probing, reverse engineering, and fuzzing. The short answer seems to be ‘pretty much anything you can imagine!’ Perhaps most scary is that for many functions, it is possible to completely disable user control – the driver is helpless.

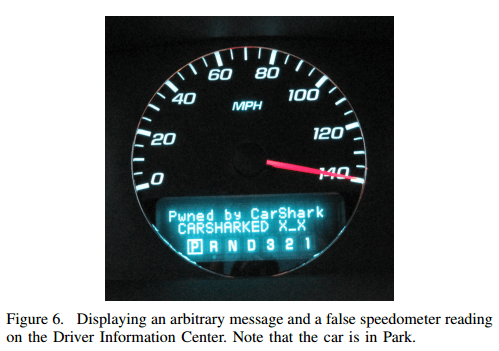

Starting with the radio , “We were able to completely control—and disable user control of—the radio, and to display arbitrary messages. For example, we were able to consistently increase the volume and prevent the user from resetting it,” and the instrument control panel: “We were able to fully control the Instrument Panel Cluster (IPC). We were able to display arbitrary messages, falsify the fuel level and the speedometer reading, adjust the illumination of instruments, and so on,” things progress rapidly from there.

- You can make it impossible to unlock the doors, turn the car off, or to remove the key from the ignition:

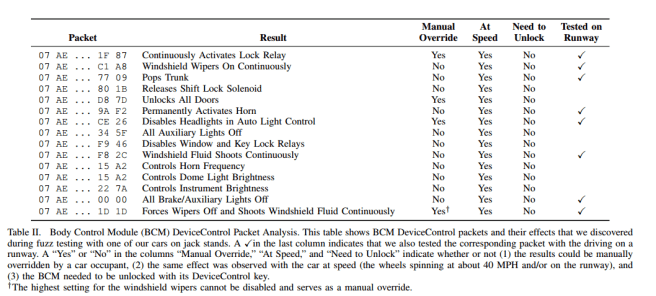

we were able to discover packets to: lock and unlock the doors; jam the door locks by continually activating the lock relay; pop the trunk; adjust interior and exterior lighting levels; honk the horn (indefinitely and at varying frequencies); disable and enable the window relays; disable and enable the windshield wipers; continuously shoot windshield fluid; and disable the key lock relay to lock the key in the ignition…. We were also able to prevent the car from being turned off: while the car was on, we caused the BCM to activate its ignition output. This output is connected in a wired-OR configuration with the ignition switch, so even if the switch is turned to off and the key removed, the car will still run. We can override the key lock solenoid, allowing the key to be removed while the car is in drive, or preventing the key from being removed at all

- Boost engine RPM or disable the engine altogether

- Unlock, lock, and prevent brakes from being engaged:

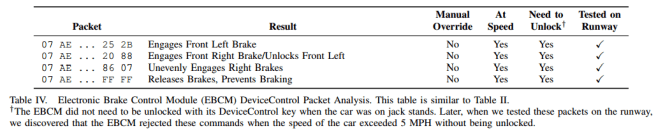

Our fuzzing of the Electronic Brake Control Module (see Table IV) allowed us to discover how to lock individual brakes and sets of brakes, notably without needing to unlock the EBCM with its DeviceControl key. In one case, we sent a random packet which not only engaged the front left brake, but locked it resistant to manual override even through a power cycle and battery removal. To remedy this, we had to resort to continued fuzzing to find a packet that would reverse this effect. Surprisingly, also without needing to unlock the EBCM, we were also able to release the brakes and prevent them from being enabled, even with car’s wheels spinning at 40 MPH while on jack stands.

- Prevent all communication to/from individual CAN components

These effects could all be reproduced while driving the car on the road (under controlled conditions):

Even at speeds of up to 40 MPH on the runway, the attack packets had their intended effect, whether it was honking the horn, killing the engine, preventing the car from restarting, or blasting the heat. Most dramatic were the effects of DeviceControl packets to the Electronic Brake Control Module (EBCM)—the full effect of which we had previously not been able to observe. In particular, we were able to release the brakes and actually prevent our driver from braking; no amount of pressure on the brake pedal was able to activate the brakes. Even though we expected this effect, reversed it quickly, and had a safety mechanism in place, it was still a frightening experience for our driver. With another packet, we were able to instantaneously lock the brakes unevenly; this could have been dangerous at higher speeds. We sent the same packet when the car was stationary (but still on the closed road course), which prevented us from moving it at all even by flooring the accelerator while in first gear.

Combining all the possibilities leads to some highly dangerous composite attacks, for example:

Our analysis in Section V uncovered packets that can disable certain interior and exterior lights on the car. We combined these packets to disable all of the car’s lights when the car is traveling at speeds of 40 MPH or more, which is particularly dangerous when driving in the dark. This includes the headlights, the brake lights, the auxiliary lights, the interior dome light, and the illumination of the instrument panel cluster and other display lights inside the car. This attack requires the lighting control system to be in the “automatic” setting, which is the default setting for most drivers. One can imagine this attack to be extremely dangerous in a situation where a victim is driving at high speeds at night in a dark environment; the driver would not be able to see the road ahead, nor the speedometer, and people in other cars would not be able to see the victim car’s brake lights.

Finally, it is possible for the attack code to erase all signs of it ever being there once an attack has been completed. The paper contains some very sobering summary tables of the discovered attack vectors. I’ve reproduced a couple below. Note especially the ‘manual override’ (can the user do anything about it), and ‘at speed’ columns. ‘No, Yes’ being the most dangerous combination!
