Combining static model checking with dynamic enforcement using the Statecall Policy Language – Madhavapeddy 2009

We know that getting distributed systems right is hard, and subtle, ‘deep’ bugs can lurk in both algorithms and implementations. Can we do better than informal reasoning coupled with some unit and integration tests? Evidence suggests we have to do better! We’ve previously looked at Amazon’s use of TLA+, and today’s selection is the first of three papers I’ve selected to probe deeper into this issue. Today’s choice looks at the Statecall Policy Language (SPL) that was used by Howard et al. in Raft refloated to validate their Raft implementation. In the next two days we’ll also be looking at distributed model checking made practical with SAMC, and pitting our wits against Molly with Peter Alvaro’s ‘Lineage Driven Fault Injection.’

The Statecall Policy Language (SPL) was designed to make model checking more accessible to regular programmers. Models are specified in terms of allowable sequences of program events, and the SPL model can then be translated by a compiler into a variety of forms. These include:

- A translation into PROMELA, which can then be used with SPIN to check static properties of the model.

- A graphical visualization using GraphViz.

- Generated code in your target implementation language (OCaml backend is currently implemented, but the design allows for others) that enables run-time validation (i.e. ensures that the real-world behaviour is not deviating from the model). Also known as a safety monitor.

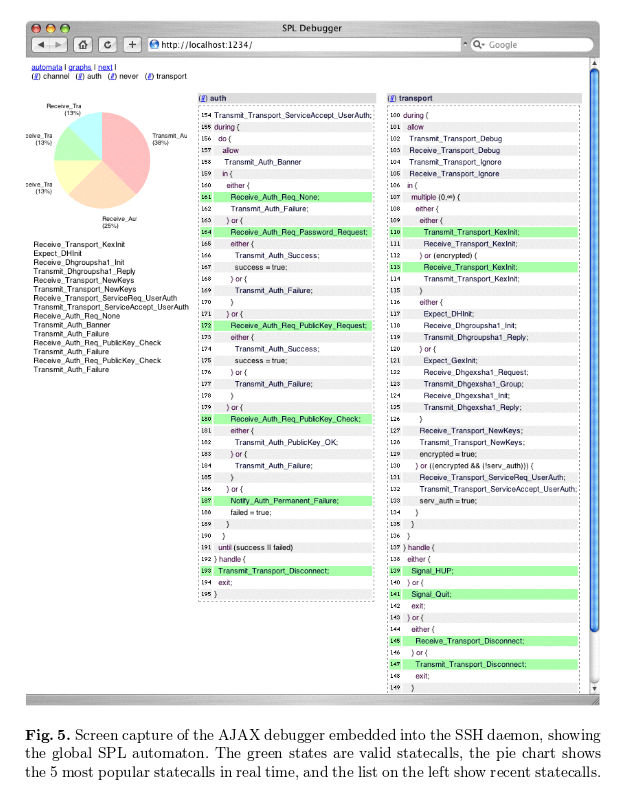

- Debugging stubs to support an HTML and Javascript debugging view giving a real-time window into all the automata embedded in the program.

Writing as of 2009, Madhavapeddy states:

None of the major implementations of protocols such as HTTP (Apache), SMTP (Sendmail/Postfix), or DNS (BIND) are regularly model-checked by their development teams. All of them regularly suffer from serious security flaws ranging from low-level buffer overflows to subtle high-level protocol errors, some of which could have been caught by using model checking.

(We’ll see more examples of verification techniques finding meaningful real-world bugs over the next two days).

Here’s an SPL model for ping that supports the -c n (only send n packets in total) and -w (wait instead of timing out) flags/behaviours.

01 automaton ping (int max_count, int count, bool can_timeout) {

02 Initialize;

03 during {

04 count = 0;

05 do {

06 Transmit_Ping;

07 either {

08 Receive_Ping;

09 } or (can_timeout) {

10 Timeout_Ping;

11 };

12 count = count + 1;

13 } until (count >= max_count);

14 } handle {

15 SIGINFO;

16 Print_Summary;

17 };

18 }

The Statecalls begin with an initial capital letter: Initialize, Transmit_Ping, Receive_Ping, Timeout_Ping, and Print_Summary. The automaton defines the allowable sequences of statecalls.

Signal handlers are often a source of bugs due to their extremely asynchronous nature — SPL provides a during/handle construct (used in the example above, see the lines 03 and 14) which models them by permitting a state transition into alternative statement blocks during normal execution of an SPL specification.

Once you are satisfied with the SPL model you can run the SPL compiler. The generated code for the executable model can be linked with the real ping implementation. You can ‘even do this manually’ if you really want to!

This code is linked in with the main ping application, and appropriate calls to initialize the automaton and invoke statecalls are inserted in the code. Crucially, we do not mandate a single style of invoking statecalls; instead the programmer can choose between automatic mechanisms (e.g. MPL packet parsing code can automatically invoke statecalls when transmitting or receiving packets), language-assisted means (e.g. functional combinators, object inheritance, or pre-processors such as cpp), or even careful manual insertion in places where other methods are inconvenient.

Underneath the covers, SPL translates models into an intermediate form based on a Control Flow Automata (CFA) graph.

For more complex protocols, it is possible to ‘divide-and-conquer’ :

It is often more convenient and readable to break down a complex protocol into smaller blocks which express the same protocol but with certain aspects factored out into simpler state machines. Accordingly, SPL specifications can define multiple automata, but the external interface hides this abstraction and only exposes a single, flat set of statecalls.

Each automaton then executes in parallel, received statecalls are only dispatched to automata which include the statecall in their alphabet.

The debugging support is a nice touch (see screenshot below):

This page contains a real-time graphical view of all the automata embedded in the program, along with the set of valid states they can transition to next. Since the granularity of the SPL automata are chosen by the programmer, this is much more useful than the “raw” models obtained through static code analysis which often include a lot of superfluous information. Figure 5 shows a screen capture of the SPL AJAX debugger single-stepping through the global SPL automaton for theMelange SSH server. The mlssh server is blocked waiting for password authentication, having previously attempted to authenticate via null and public-key authentication. In our experience, the debugger was a valuable tool to debug complex protocol bugs in our implementation, as the single-stepping view via this debugger is significantly higher level than the alternative provided by either the native OCaml debugger or gdb.

2 thoughts on “Combining static model checking with dynamic enforcement using the Statecall Policy Language”

Comments are closed.