JavaScript Zero: Real JavaScript and zero side-channel attacks Schwarz et al., NDSS’18

We’re moving from the server-side back to the client-side today, with a very topical paper looking at defences against microarchitectural and side-channel attacks in browsers. Since the paper was submitted to NDSS’18, the subject has of course grown in prominence with the announcement of the Meltdown and Spectre attacks.

Microarchitectural attacks can also be implemented in JavaScript, exploiting properties inherent to the design of the microarchitecture, such as timing differences in memory accesses. Although JavaScript code runs in a sandbox, Oren et al. demonstrated that it is possible to mount cache attacks in JavaScript. Since their work, a series of microarchitectural attacks have been mounted from websites, such as page deduplication attacks, Rowhammer attacks, ASLR bypasses, and DRAM addressing attacks.

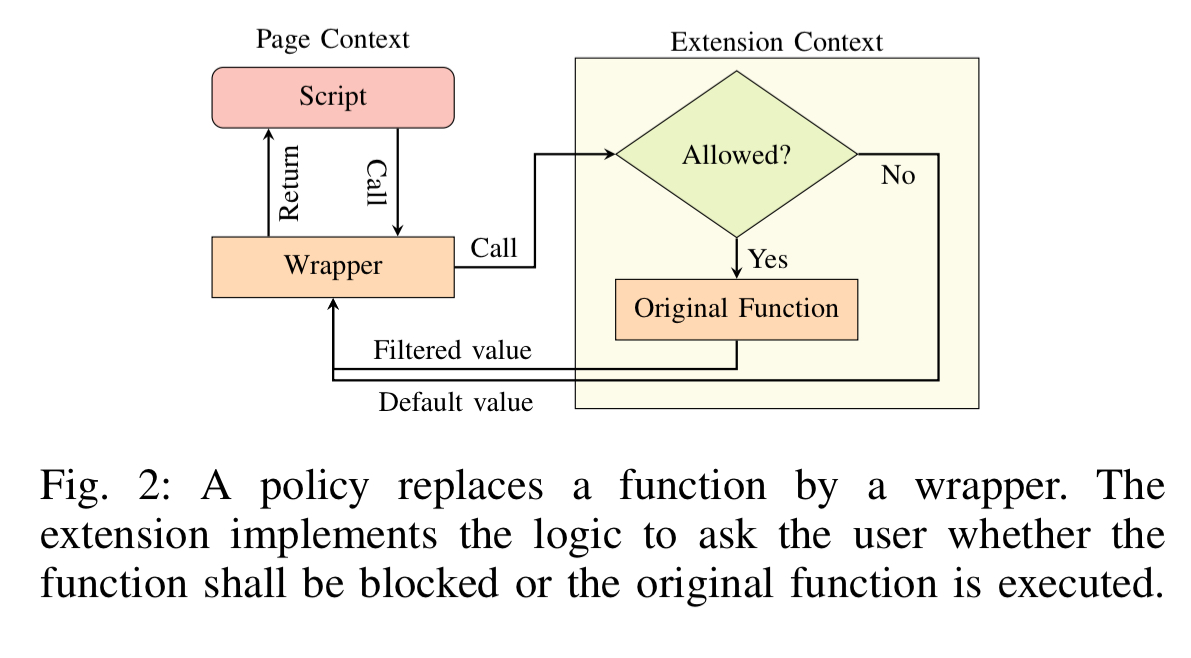

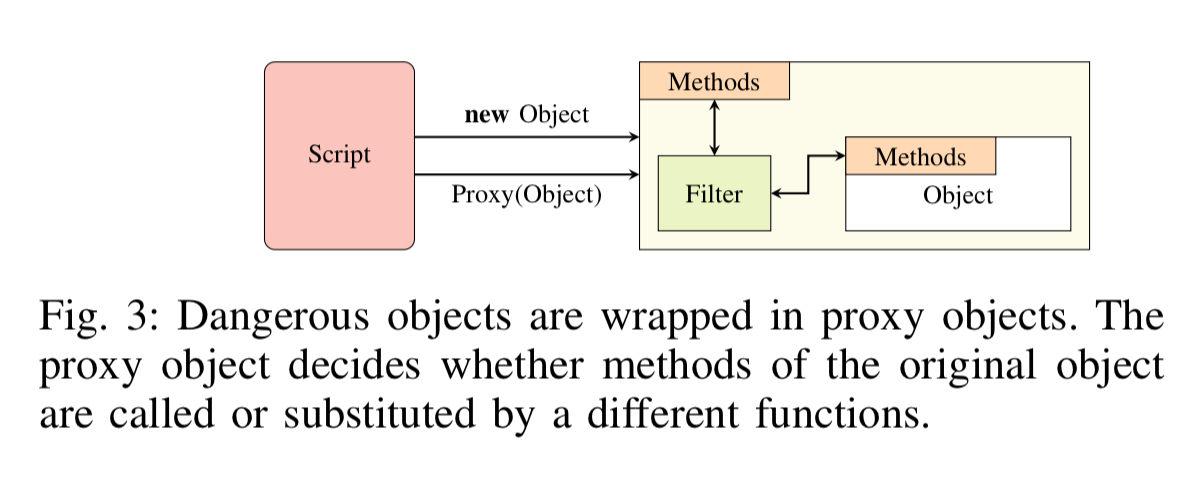

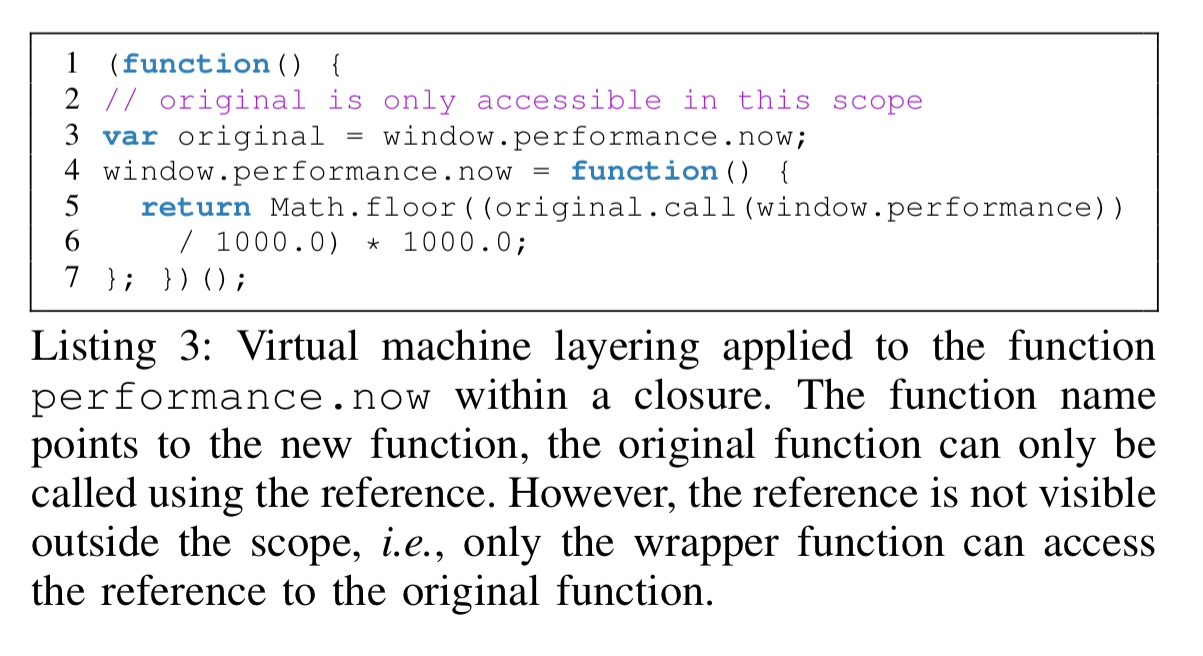

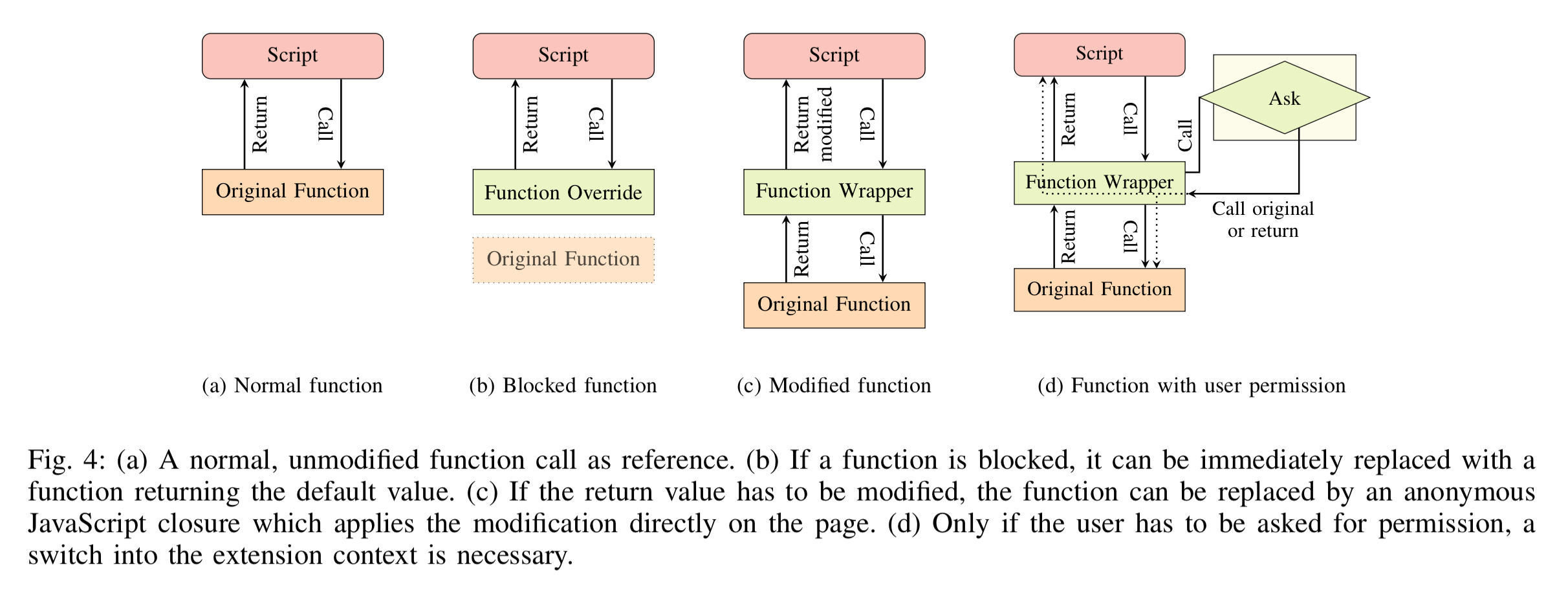

Chrome Zero is a proof-of-concept implementation that defends against these attacks. It installs as a Chrome extension and protects functions, properties, and objects that can be exploited to construct attacks. The basic idea is very simple: functions are wrapped with replacement versions that allow injection of a policy. This idea of wrapping functions (and replacing properties with accessor properties, and certain objects with proxy objects) goes by the fancy name of virtual machine layering.

Closures are used when wrapping functions to ensure that references to the original function are inaccessible to any code outside of the closure.

Policies determine what the wrappers actually do. There are four possible alternatives:

- Allow (passthrough)

- Block – the function is replaced by a stub that returns a given default value

- Modify – the function is replaced with a policy-defined function, which may still call the original function if required. An example would be reducing the resolution of timers.

- User permission – JavaScript execution is paused and the user is asked for permission to continue executing the script.
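To make the wrapping and the four policy types concrete, here is a minimal sketch. It is not Chrome Zero's actual code: the `wrapFunction` helper, the shape of the policy object, and the `askUser` callback are hypothetical names invented for this illustration, and the user prompt is modelled as a synchronous callback rather than a real pause of script execution.

```javascript
// Minimal sketch of closure-based function wrapping with a policy.
// wrapFunction, the policy shape, and askUser are hypothetical names,
// not taken from the Chrome Zero source.
function wrapFunction(obj, name, policy, askUser = () => false) {
  const original = obj[name]; // reachable only through this closure
  obj[name] = function (...args) {
    switch (policy.action) {
      case "allow":
        return original.apply(this, args);
      case "block":
        return policy.defaultValue; // stub: never calls the original
      case "modify":
        // The policy function receives the original and may call it.
        return policy.replacement(original.bind(this), ...args);
      case "ask":
        return askUser(name)
          ? original.apply(this, args)
          : policy.defaultValue;
      default:
        throw new Error(`unknown policy action: ${policy.action}`);
    }
  };
}

// Example: a "modify" policy that reduces a timer's resolution to 100 ms.
// fakePerformance stands in for the browser's performance object.
const fakePerformance = { now: () => 1234.5678 };
wrapFunction(fakePerformance, "now", {
  action: "modify",
  replacement: (originalNow) => Math.floor(originalNow() / 100) * 100,
});
console.log(fakePerformance.now()); // 1200
```

After wrapping, code that only has access to `fakePerformance` can no longer reach the unwrapped `now`, because the sole remaining reference lives inside the closure.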


As the code is continuously optimized by the JIT, our injected functions are compiled to highly efficient native code, with a negligible performance difference compared to the original native functions. The results of our benchmarks show that Chrome Zero does not have a visible impact on the user experience for everyday usage.

With that basic description of the mechanism out of the way, let’s get down to the interesting part: the features in JavaScript that are used in microarchitectural and side-channel attacks, and how Chrome Zero protects them.

The features used to build attacks

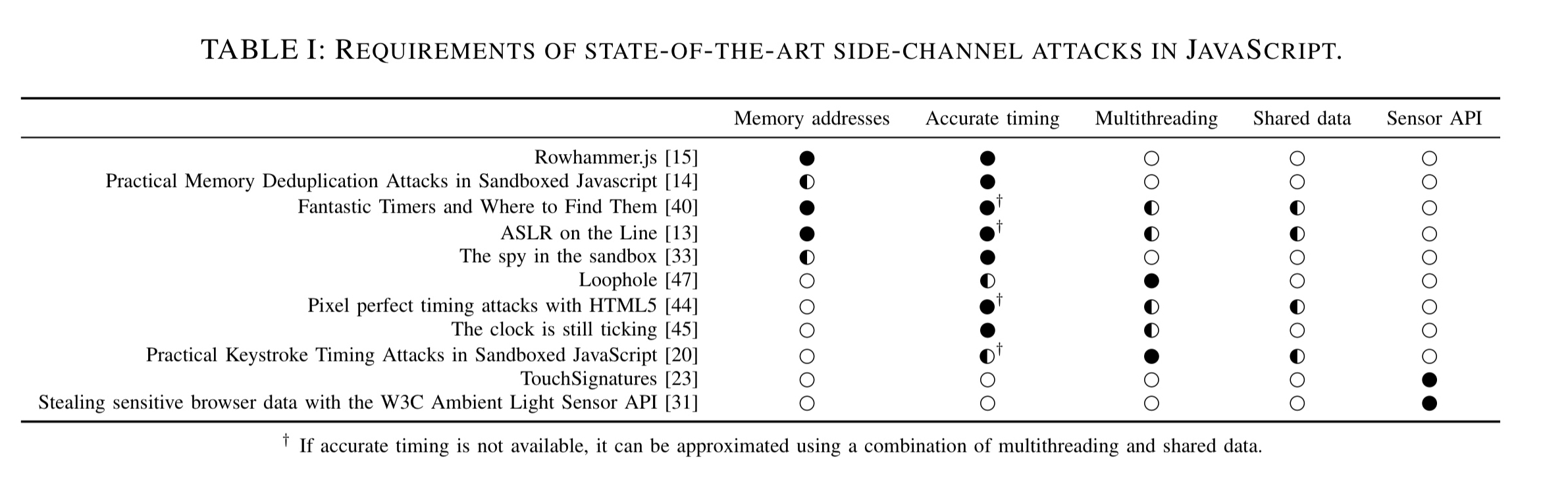

We identified several requirements that are the basis for microarchitectural attacks, i.e., every attack relies on at least one of the primitives. Moreover, sensors found on many mobile devices, as well as modern browsers introduce side-channels which can also be exploited from JavaScript.

The following table provides a nice summary of attacks and the features that they require:


Memory addresses

JavaScript never discloses virtual addresses, but ArrayBuffers can be exploited to reconstruct them. Once an attacker has knowledge of virtual addresses, they have effectively defeated address space layout randomization (ASLR). Microarchitectural attacks typically need physical addresses. Browser engines allocate ArrayBuffers page aligned, so the first byte of a buffer is at the beginning of a new physical page. Iterating over a large array also results in a page fault at the beginning of each new page. Resolving a page fault takes measurably longer than a regular memory access, so the page borders can be detected.

In Chrome Zero, the challenge is to ensure that array buffers are not page-aligned, and that attackers cannot discover the offset of array buffers within the page. Chrome Zero uses four defences here:

- Buffer ASLR – to prevent arrays from being page aligned, the length argument of buffer constructors is modified to allocate an additional 4KB. The start of the array is then moved to a random offset within this page, and the offset is added to every array access.

- Preloading – iterating through the array after constructing it triggers a page fault for every page, after which an attacker cannot learn anything from iterating since the memory is already mapped.

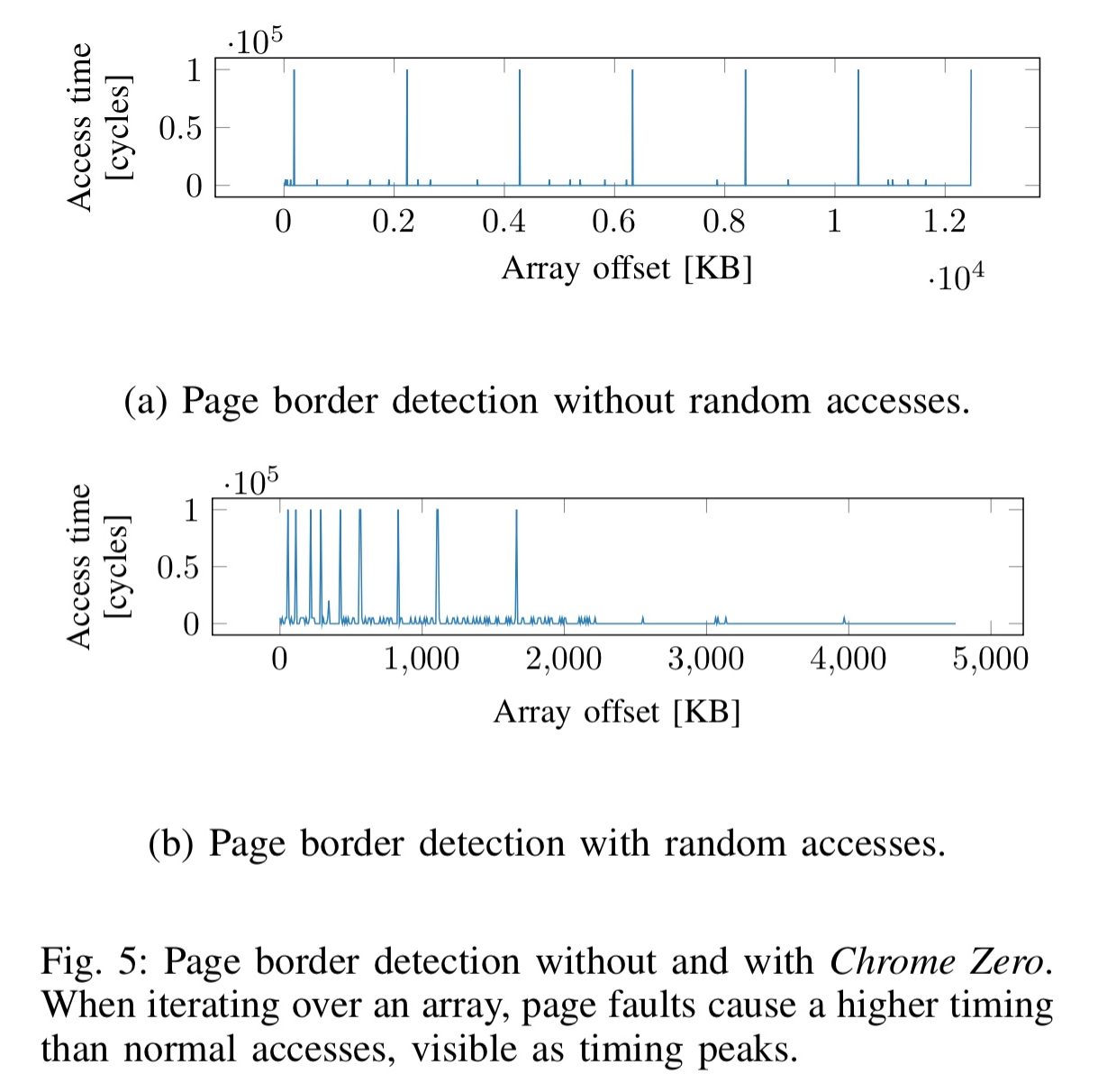

- Non-determinism – as an alternative to pre-loading, array setters can be modified to add a memory access to a random array index for every access of the array. This offers stronger protection than preloading, as it prevents an attacker from simply waiting for pages to be swapped out. With this random access mechanism, an attacker can learn the number of pages, but not where the page borders are (see Fig 5 below).

- Array index randomization – the above three policies cannot thwart page-deduplication attacks. To prevent these attacks, we have to ensure that an attacker cannot deterministically choose the content of an entire page. This can be done by introducing a random linear function mapping array indices to the underlying memory. (Mechanical sympathisers weep at this point 😉 ). An access to array index x is replaced with $(ax + b) \bmod n$, where a and b are randomly chosen, n is the size of the buffer, and a is co-prime to n so that every index maps to a distinct memory location.
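The index randomization policy can be illustrated with a short sketch, assuming the mapping has the linear form (a·x + b) mod n. The helper names `gcd` and `makeIndexMap` are hypothetical, and the code assumes n is small enough that a·x stays within JavaScript's safe integer range. Choosing a with gcd(a, n) = 1 is what makes the mapping a permutation of the indices, so no two indices collide.

```javascript
// Sketch of array index randomization: index x maps to (a*x + b) mod n.
// gcd and makeIndexMap are hypothetical helper names for this example.
function gcd(x, y) {
  while (y !== 0) [x, y] = [y, x % y];
  return x;
}

function makeIndexMap(n, random = Math.random) {
  let a;
  do {
    a = 1 + Math.floor(random() * (n - 1)); // candidate multiplier
  } while (gcd(a, n) !== 1); // retry until a is co-prime to n
  const b = Math.floor(random() * n); // random offset in [0, n-1]
  return (x) => (a * x + b) % n;
}

// Check that the mapping is a permutation of 0..n-1 for a 4 KB buffer.
const n = 4096;
const mapIndex = makeIndexMap(n);
const seen = new Set();
for (let x = 0; x < n; x++) seen.add(mapIndex(x));
console.log(seen.size === n); // true
```

Because consecutive logical indices land on scattered physical offsets, an attacker writing a chosen pattern to indices 0..4095 no longer controls the byte layout of any one page.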

Timing information

Accurate timing is one of the most important primitives, inherent to nearly all microarchitectural and side-channel attacks.

JavaScript provides the Date object with a resolution of 1 ms, and the Performance object which provides timestamps accurate to a few microseconds. Microarchitectural attacks often require resolution on the order of nanoseconds though. Custom timing primitives, often based on some form of monotonically incrementing counter, are used as clock replacements.

Chrome Zero implements two timing defences: low-resolution timestamps and fuzzy time.

- For low-resolution timestamps, the result of a high-resolution timestamp is simply rounded to a multiple of 100ms.

- In addition to rounding the timestamp, fuzzy time adds random noise while still guaranteeing that timestamps are monotonically increasing.
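A rough sketch of the two timing policies follows. The helper names `lowResClock` and `fuzzyClock` are hypothetical, rounding is done by flooring, and the noise model (uniform noise of up to one resolution step, clamped so values never decrease) is an assumption made for illustration rather than the exact scheme used by Chrome Zero.

```javascript
// Sketch of the two timing policies. Helper names and the exact noise
// model are assumptions made for this illustration.
function lowResClock(now, resolutionMs = 100) {
  // Round down to a multiple of the resolution.
  return () => Math.floor(now() / resolutionMs) * resolutionMs;
}

function fuzzyClock(now, resolutionMs = 100, random = Math.random) {
  const rounded = lowResClock(now, resolutionMs);
  let last = -Infinity;
  return () => {
    // Add up to one resolution step of noise, then clamp so that the
    // reported time never goes backwards.
    const noisy = rounded() + random() * resolutionMs;
    last = Math.max(last, noisy);
    return last;
  };
}

// Example with a controllable stand-in for performance.now().
let t = 0;
const coarse = lowResClock(() => t);
t = 250;
console.log(coarse()); // 200
```

With plain rounding an attacker can still watch for the moment the value flips to the next multiple (the edge-thresholding trick mentioned in the evaluation); the added noise is what blurs those edges.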

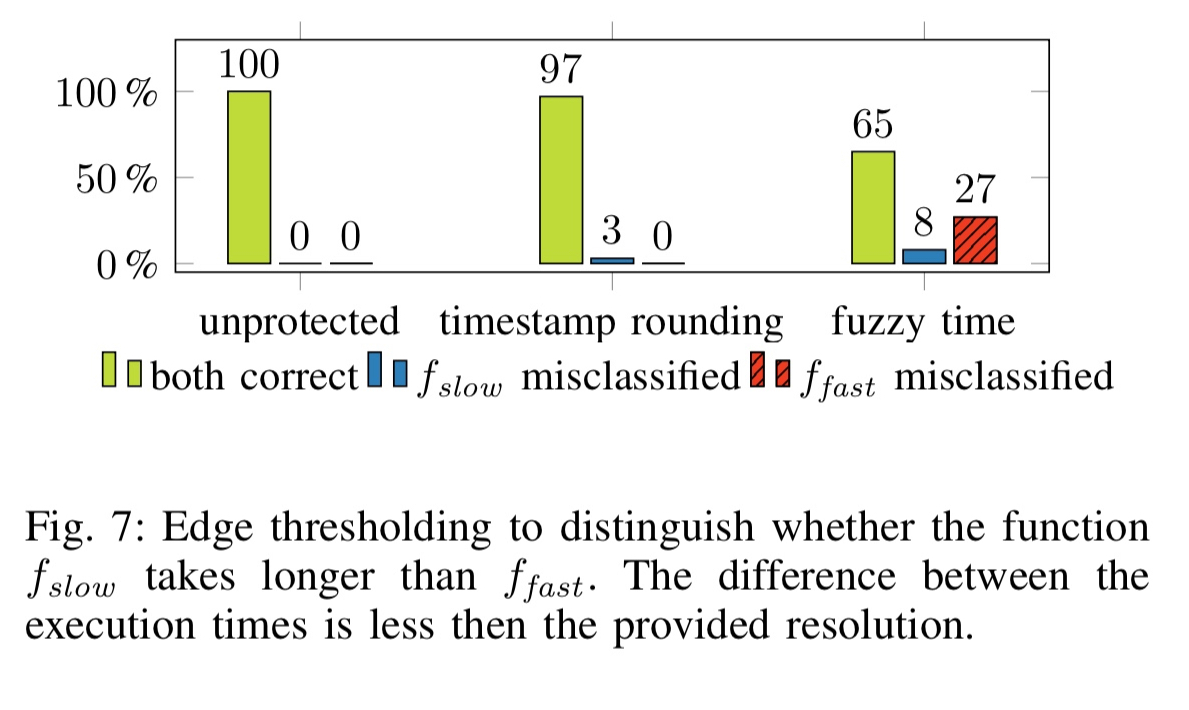

The following figure shows these policies at work against an attacker trying to distinguish between fast and slow versions of a function.

With the low-resolution timestamp and edge-thresholding, the functions are correctly distinguished in 97% of cases… when fuzzy time is enabled, the functions are correctly distinguished in only 65% of the cases, and worse, in 27% of the cases the functions are wrongly classified.

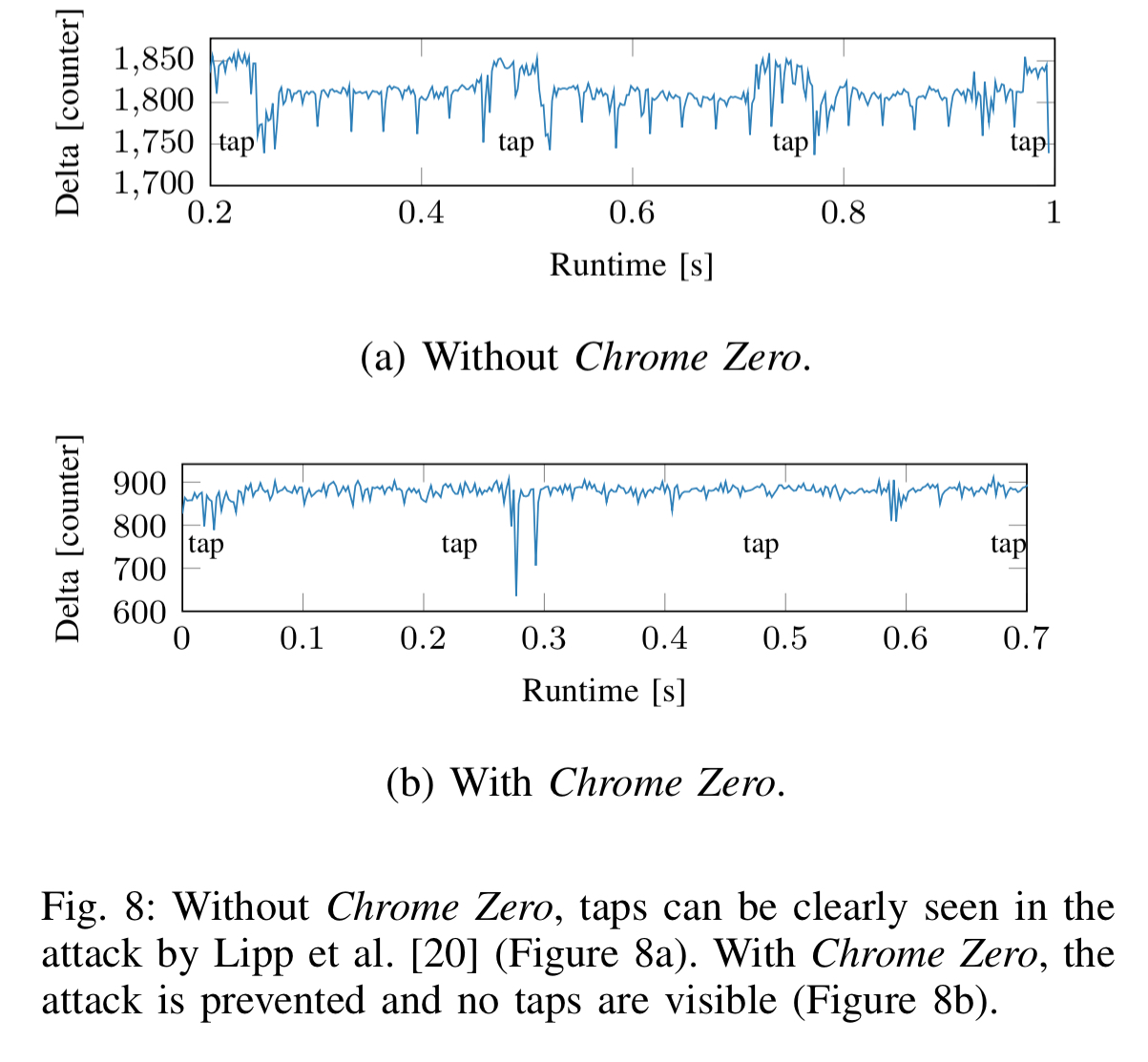

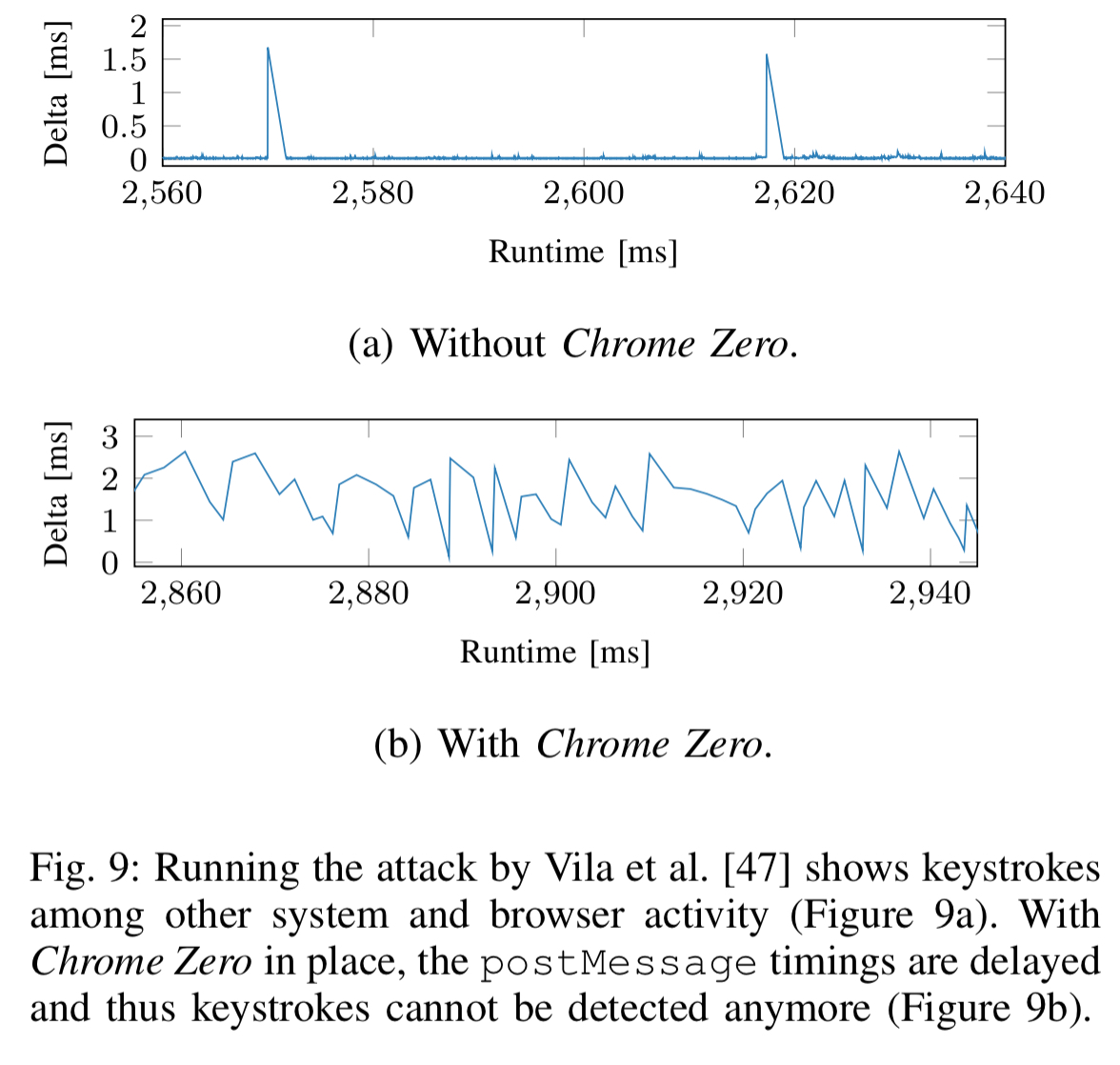

This is more than enough to defeat the JavaScript keystroke detection attack of Lipp et al.:

Multithreading

The support for parallelism afforded by web workers provides new side-channel attack possibilities, by measuring the dispatch time of the event queue. For example, an endless loop running within a web worker can detect CPU interrupts, which can then be used to deduce keystroke information.

A drastic but effective Chrome Zero policy is to prevent real parallelism by replacing web workers with a polyfill intended for unsupported browsers that simulates web workers on the main thread. A less drastic policy is to delay the postMessage function with random delays similar to fuzzy timing. This is sufficient to defeat keystroke detection attacks:
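The random-delay variant of this policy might look roughly like the following sketch. The `delayPostMessage` helper and its parameters are hypothetical, and the scheduler is injectable only so that the example can run outside a browser with a fake worker. Note one simplification: independent random delays could reorder messages, which a real implementation would need to handle.

```javascript
// Sketch of delaying postMessage by a random amount.
// delayPostMessage and its parameters are hypothetical names; the
// scheduler is injectable so the example also runs outside a browser.
function delayPostMessage(target, maxDelayMs, random = Math.random, schedule = setTimeout) {
  const original = target.postMessage; // kept inside the closure
  target.postMessage = function (...args) {
    const delay = random() * maxDelayMs;
    schedule(() => original.apply(target, args), delay);
  };
}

// Example with a fake worker and a fake scheduler that records calls.
const delivered = [];
const fakeWorker = { postMessage: (msg) => delivered.push(msg) };
const pending = [];
delayPostMessage(fakeWorker, 10, () => 0.5, (fn, ms) => pending.push({ fn, ms }));

fakeWorker.postMessage("hello");
console.log(delivered.length, pending[0].ms); // 0 5
pending[0].fn(); // the scheduler fires; the message is delivered now
console.log(delivered.length); // 1
```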

Shared data

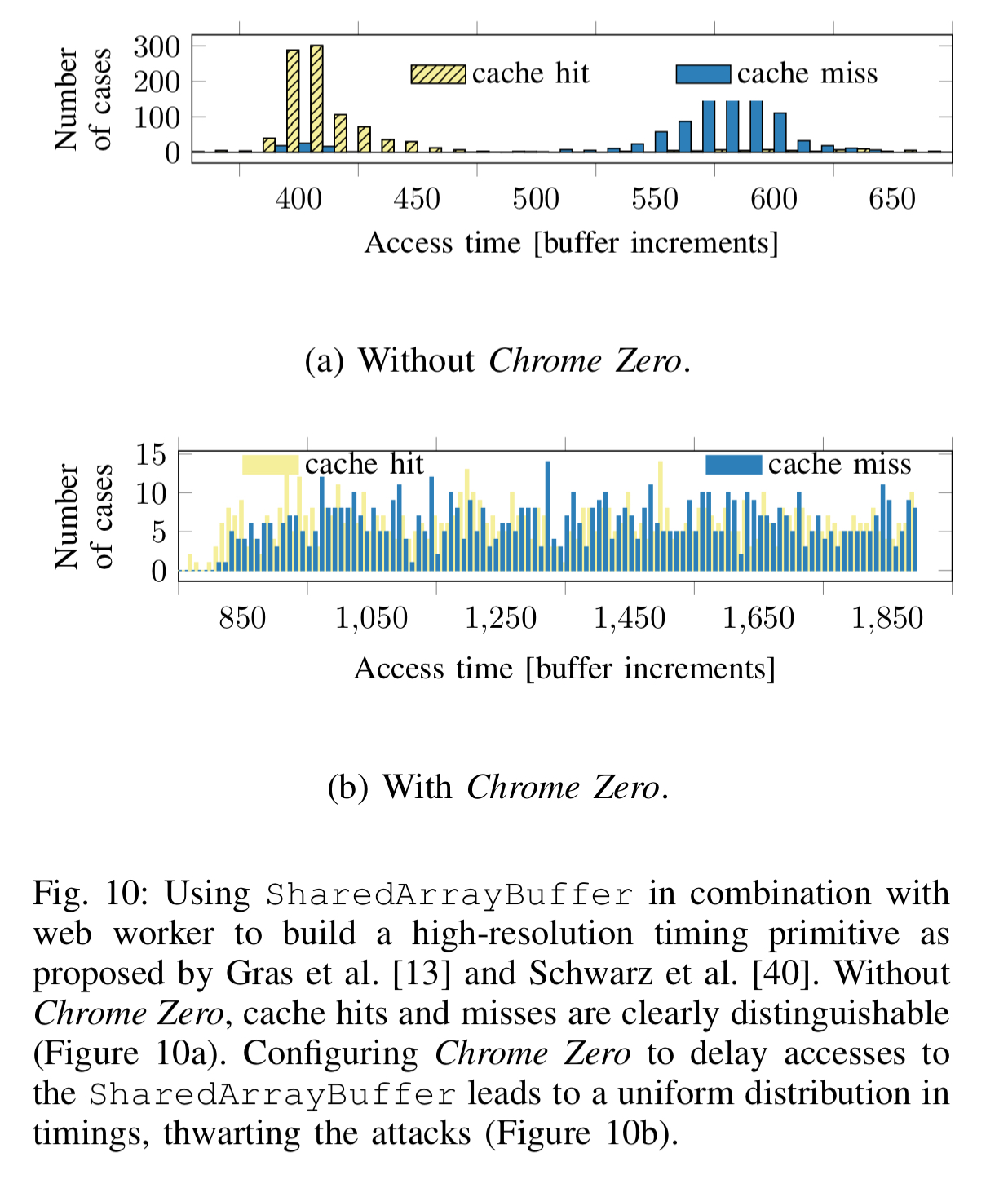

JavaScript’s SharedArrayBuffer behaves like a normal ArrayBuffer, but can be simultaneously accessed by multiple workers. This shared data can be exploited to build timing primitives with a nanosecond resolution.

One simple Chrome Zero policy is to disallow use of SharedArrayBuffers (which are deactivated by default in modern browsers anyway at the moment). An alternative policy is to add random delays to accesses of shared buffers. This is enough to prevent the high-resolution timing needed by attacks:

Sensor API

Some sensors are already covered by browsers’ existing permission systems, but several sensors are not:

Mehrnezhad et al., showed that access to the motion and orientation sensor can compromise security. By recording the data from these sensors, they were able to infer PINs and touch gestures of the user. Although not implemented in JavaScript, Spreitzer showed that access to the ambient light sensor can also be exploited to infer user PINs. Similarly, Olejnik utilized the Ambient Light Sensor API to recover information on the user’s browsing history, to violate the same-origin policy, and to steal cross-origin data.

The battery status API can also be used to enable a tracking identifier. In Chrome Zero the battery interface can be set to return randomized or fixed values, or be disabled entirely. Likewise, Chrome Zero can either return a fixed value from the ambient light sensor API or disable it. For motion and orientation sensors, data can be spoofed or access prohibited entirely.
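As an illustration of the battery policies, here is a small sketch built around `navigator.getBattery()`, which in browsers that support the Battery Status API returns a promise for an object with `charging`, `chargingTime`, `dischargingTime`, and `level` fields. The `applyBatteryPolicy` helper and its mode names are hypothetical, the spoofed object omits the event handling of a real BatteryManager, and a plain object stands in for `navigator` so the example runs anywhere.

```javascript
// Sketch of the battery policies: fixed values, randomized values, or
// disabled. applyBatteryPolicy and the mode names are hypothetical.
function applyBatteryPolicy(nav, mode, random = Math.random) {
  if (mode === "disabled") {
    // Shadow the method so feature detection sees no battery API.
    nav.getBattery = undefined;
    return;
  }
  nav.getBattery = () =>
    Promise.resolve({
      charging: true,
      chargingTime: 0,
      dischargingTime: Infinity,
      // Real levels range from 0.0 to 1.0.
      level: mode === "randomized" ? random() : 1,
    });
}

// Example with a stand-in for the browser's navigator object.
const fakeNavigator = {};
applyBatteryPolicy(fakeNavigator, "fixed");
fakeNavigator.getBattery().then((b) => console.log(b.level, b.charging)); // 1 true
```

With fixed values every visitor reports the same battery state, so the readings no longer help to distinguish one user from another.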

Evaluation

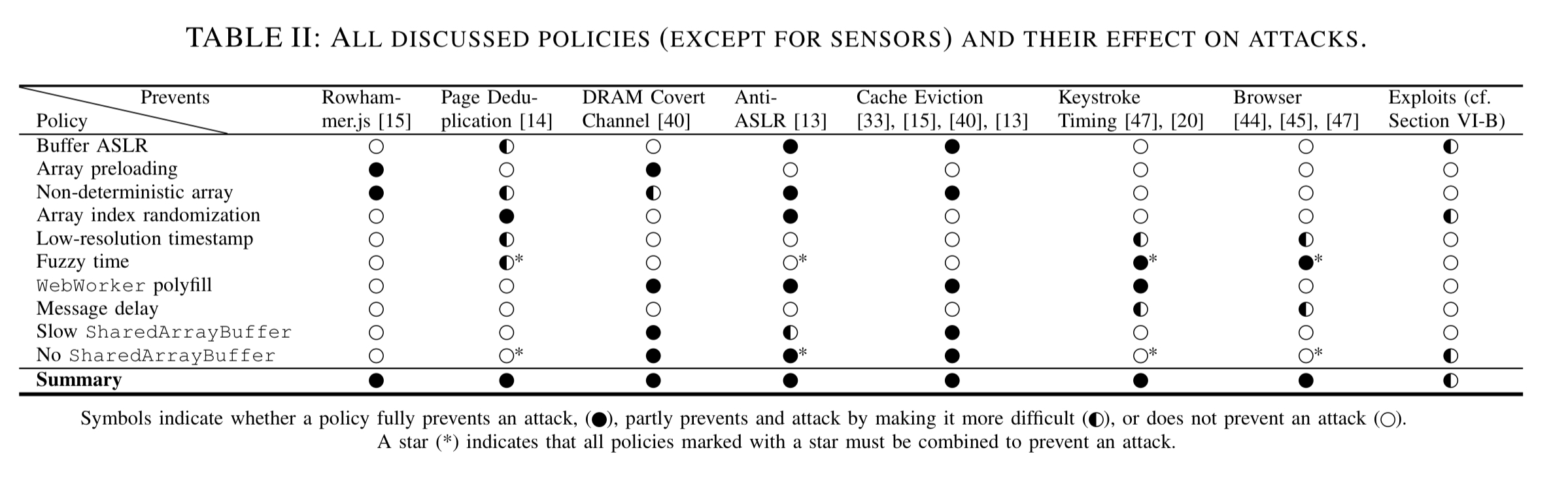

We’ve already seen a number of examples of Chrome Zero preventing attacks. The following table summarises the policies and their effect on attacks:


Back-testing on all 12 CVEs discovered since 2016 for Chrome 49 or later reveals that half (6) of them are prevented. Creating policies to specifically target CVEs was not a goal of the current research.

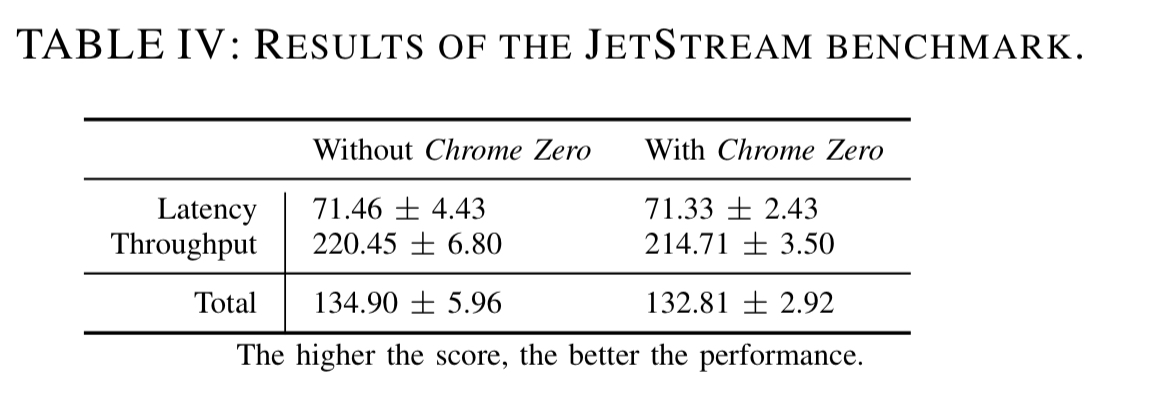

The performance overhead of Chrome Zero was evaluated on the Alexa Top 10 websites, and correct functioning of sites was evaluated for the Alexa Top 25. Page load times (we’re not told what metric is actually measured) increase from 10.64ms on average, to 89.08ms when all policies are enabled — the overhead is proportional to the number of policies in force. On the JetStream browser benchmark, Chrome Zero shows a performance overhead of 1.54%.

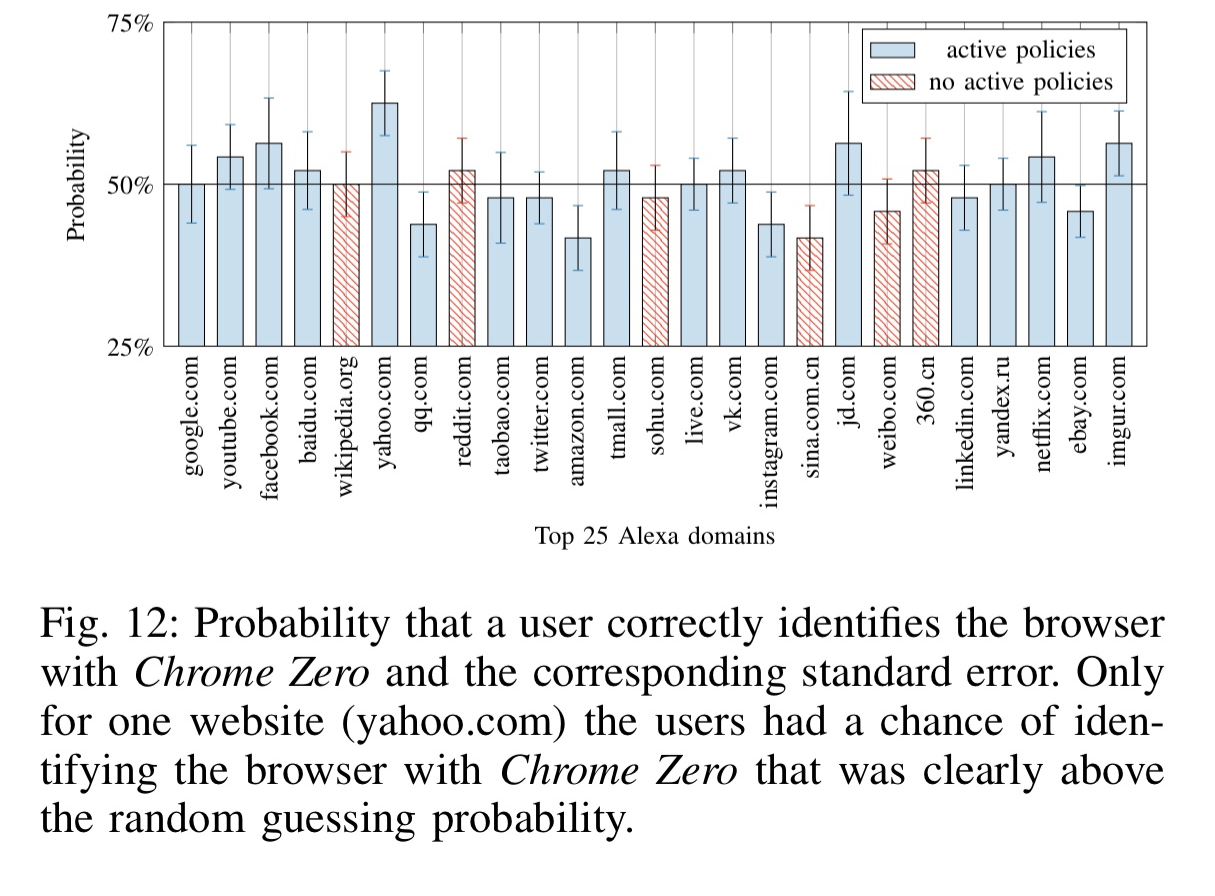

Depending on what an application actually does with arrays, web workers etc., I would expect the impact to be significantly greater in some circumstances. However, in a double-blind user study with 24 participants, the participants were unable to tell whether they were using a browser with Chrome Zero enabled or not – apart from on the yahoo.com site.

Our work shows that transparent low-overhead defenses against JavaScript-based state-of-the-art microarchitectural attacks and side-channel attacks are practical.