Why do Record/Replay Tests of Web Applications Break? – Hammoudi et al. ICST ’16

Your web application regression tests created using record/replay tools are fragile and keep breaking. Hammoudi et al. set out to find out why. If we knew that, perhaps we could design mechanisms to automatically repair broken tests, or to build more robust tests. The authors look at 300 different versions of five open source web applications, creating test suites for their initial versions using Selenium IDE and then following the evolution of the projects. When a test broke in a given version, it was repaired so that the process could continue. At the end of this process, data had been gathered on 722 individual test breakages.

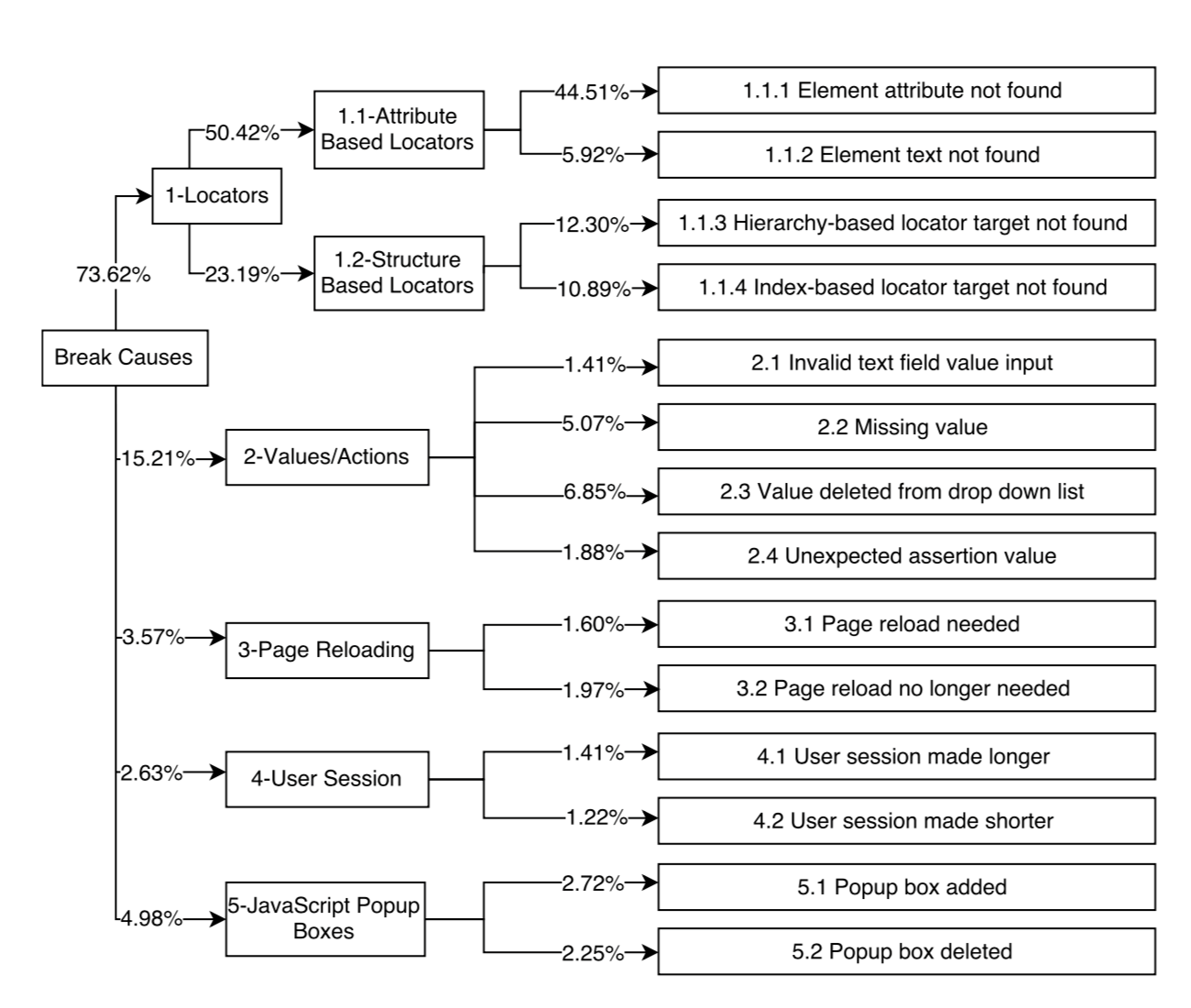

Using the data we gathered, we developed a taxonomy of the causes of test breakages that categorizes all of the breakages observed on the applications we studied. We then gathered 153 versions of three additional web applications and applied the foregoing process to them as well; this yielded data on 343 additional test breakages. We analyzed these in light of our taxonomy and were able to accommodate all of them without further changing the taxonomy; this provides evidence that our taxonomy may be more generally applicable.

Hammoudi et al. had to create their own tests since, “In searching for web applications, we discovered that few open-source applications are provided with capture-replay test suites; in fact, few are provided with any test suites at all….”

This will be a shorter paper write-up than usual. What you really need to know is that your tests are breaking because the information used to locate page elements keeps changing. After collating and clustering all of the breakages across the web tests, the authors create a taxonomy with five high-level causes of test breakages:

- Causes related to locators used in tests

- Causes related to values and actions used in tests

- Causes related to page reloading

- Causes related to changes in user session times

- Causes related to popup boxes

Locators are used by JavaScript and other languages, and by record/replay tools, to identify and manipulate elements. We identify two classes of locators, the second of which is composed of two sub-classes…

Attribute-based locators use element attributes such as element ids and names. Structure-based locators rely on the structure of a web page and may locate elements via a hierarchy (e.g. xpaths or CSS selectors) or via an index in the case of multiple otherwise identical elements.
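To make the distinction concrete, here is a minimal sketch of how each kind of locator fares when a page evolves. It is not taken from the paper: the two page versions, the locator strings, and the helper names (`resolve`, `broken_locators`) are all hypothetical, and it uses the limited XPath support in Python's standard-library `xml.etree.ElementTree` rather than Selenium itself.

```python
import xml.etree.ElementTree as ET

# Hypothetical version 1 of a login page (kept well-formed so ElementTree can parse it).
PAGE_V1 = """
<html><body>
  <form>
    <input id="user" name="username"/>
    <input id="pass" name="password"/>
  </form>
</body></html>
"""

# Hypothetical version 2: the id of the first field is renamed
# and the form is wrapped in a new <div>.
PAGE_V2 = """
<html><body>
  <div>
    <form>
      <input id="login-user" name="username"/>
      <input id="pass" name="password"/>
    </form>
  </div>
</body></html>
"""

# One illustrative locator per category, all targeting the username field.
LOCATORS = {
    "attribute (id)": ".//input[@id='user']",
    "attribute (name)": ".//input[@name='username']",
    "structure (hierarchy)": "./body/form/input[1]",
    "structure (index)": ".//input[1]",
}


def resolve(page, locator):
    """Return True if the locator finds an element in the given page."""
    root = ET.fromstring(page)
    return root.find(locator) is not None


def broken_locators(old_page, new_page):
    """Names of locators that resolved on the old page but not on the new one."""
    return [
        name
        for name, path in LOCATORS.items()
        if resolve(old_page, path) and not resolve(new_page, path)
    ]


if __name__ == "__main__":
    for name in broken_locators(PAGE_V1, PAGE_V2):
        print("broken:", name)
```

In this toy case the id-based locator and the hierarchy-based locator both stop resolving, while the name-based and index-based ones survive. Which locators break depends entirely on which aspect of the page changed, so a different edit to the page would give a different result.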

Over 70% of all breakages are due to locator fragility in the face of change, and over 50% of all breakages are due specifically to attribute-based locators.

Note that we cannot conclude from this that attribute-based location is inferior to other forms of location, even though it is responsible for most breakages, since we don’t know the base rate of usage of the different location strategies. My personal suspicion is that attribute-based location is one of the more robust strategies. In terms of giving guidance to practitioners seeking to write more robust tests, it would be really nice to see this additional level of analysis.
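The base-rate point can be illustrated with a small calculation. The numbers below are invented purely for illustration (the paper does not report locator usage counts), and the function names are hypothetical. The sketch shows that a strategy can account for most breakages and still be the less fragile one per use.

```python
# Hypothetical counts, NOT from the paper: how often each locator kind is used
# in a test suite, and how many breakages each kind caused.
HYPOTHETICAL_USES = {"attribute": 800, "structure": 200}
HYPOTHETICAL_BREAKAGES = {"attribute": 55, "structure": 45}


def breakage_share(breakages):
    """Fraction of all breakages attributed to each locator kind."""
    total = sum(breakages.values())
    return {kind: count / total for kind, count in breakages.items()}


def per_use_breakage_rate(breakages, uses):
    """Breakages per locator use, i.e. the share corrected for the base rate."""
    return {kind: breakages[kind] / uses[kind] for kind in breakages}


if __name__ == "__main__":
    print("share of breakages:", breakage_share(HYPOTHETICAL_BREAKAGES))
    print(
        "breakages per use: ",
        per_use_breakage_rate(HYPOTHETICAL_BREAKAGES, HYPOTHETICAL_USES),
    )
```

With these made-up inputs, attribute-based locators cause the majority of breakages yet break less often per use than structure-based ones, simply because they are used far more. Real usage counts would be needed to say which way it goes for the applications in the study.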

Our data suggests which categories of test breakages merit the greatest attention. Locators caused over 73% of the test breakages we observed, and attribute-based locators caused the majority of these. Clearly, addressing just this class of errors by finding ways to repair them if they break would have the largest overall impact on the reusability of tests across releases. The data also suggest where subsequent priorities should be placed in terms of finding methods for repairing test breakages.